CloudAMQP never had a traditional monolithic setup. It was built from scratch on small, independent, manageable services that communicate with each other. These services are highly decoupled, and each one is focused on its own task. This article gives an overview of, and a deeper insight into, the automated process behind CloudAMQP. It describes some of our microservices and how we use RabbitMQ as the message broker for communication between them.

Background of CloudAMQP

A few years ago, Carl Hörberg (CEO @CloudAMQP) saw the need for a hosted RabbitMQ solution. At the time, he was working at a consultancy company where he used RabbitMQ in combination with Heroku and AppHarbor. He was looking for a hosted RabbitMQ solution himself, but could not find one. Shortly afterwards he started CloudAMQP, which entered the market in 2012.

The idea was to make it possible for anyone to get a running RabbitMQ cluster with just a click of a button.

The automated process behind CloudAMQP

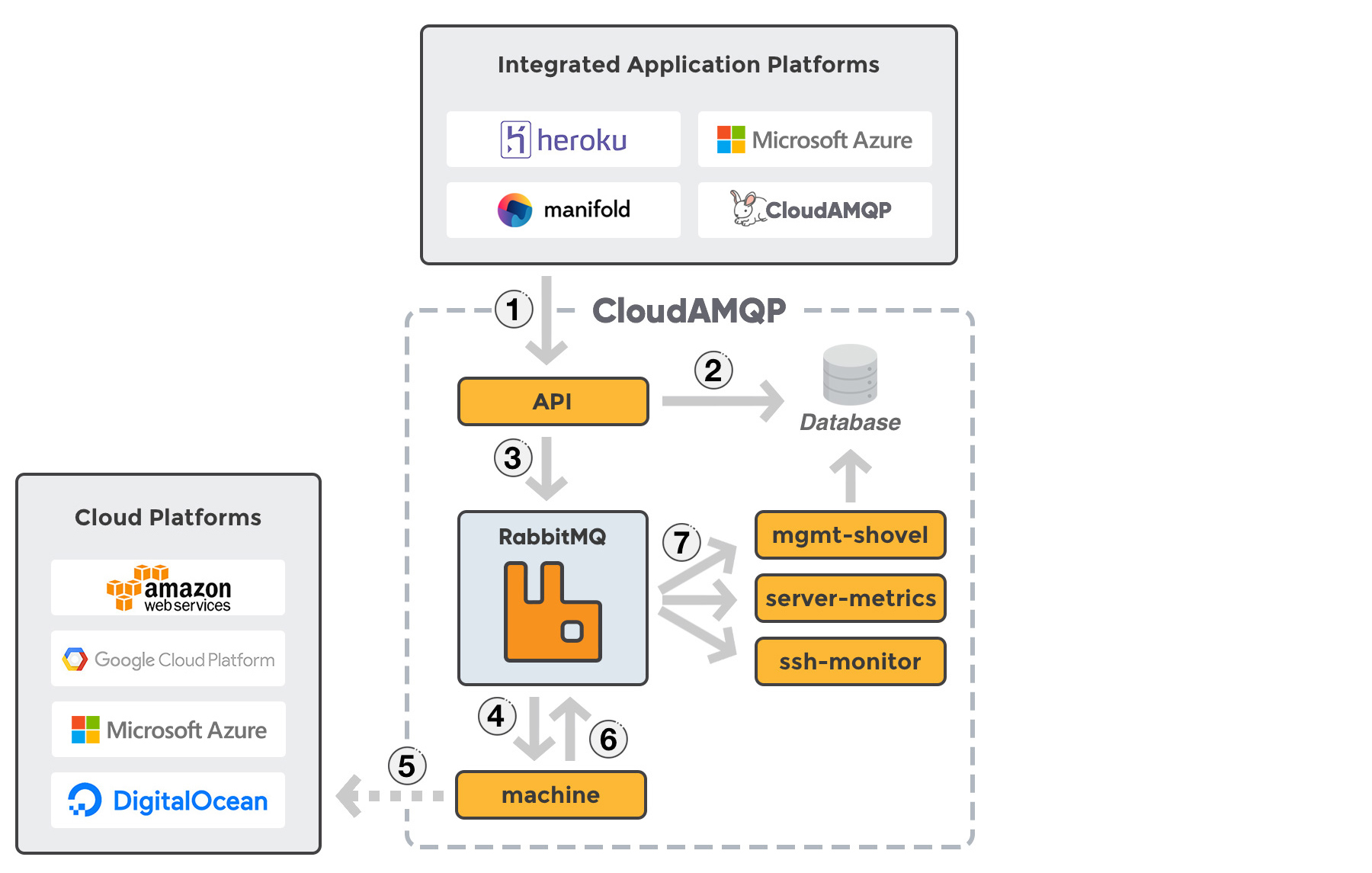

CloudAMQP is built upon multiple small microservices, with RabbitMQ as our messaging system. RabbitMQ is responsible for distributing events to the services that listen for them. We can send a message without knowing whether another service is able to handle it immediately; messages simply wait until the responsible service is ready. A service publishing a message does not need to know anything about the inner workings of the services that will process it. We follow the pub-sub (publish-subscribe) pattern and rely on retry upon failure.

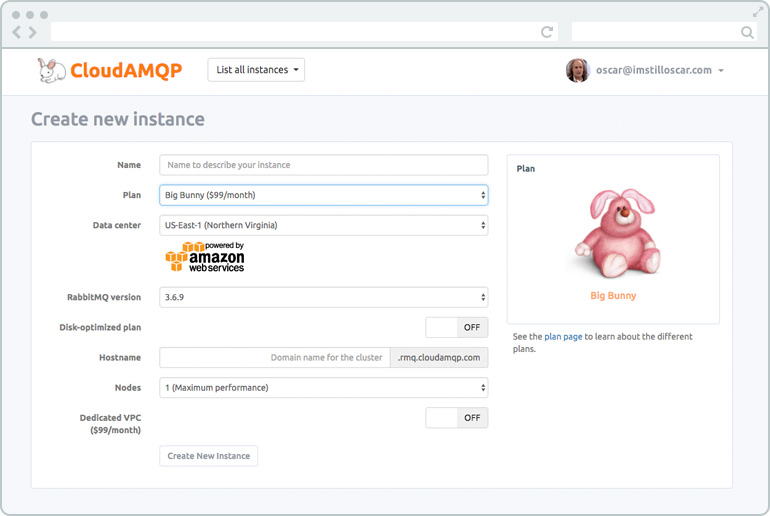

When you create a CloudAMQP instance, you choose a plan and how many nodes you would like to have. The cluster behaves a bit differently depending on the cluster setup. You can also create your instance in a dedicated VPC and select the RabbitMQ version.

A dedicated RabbitMQ instance can be created via the CloudAMQP control panel, or by adding the CloudAMQP add-on from any of our integrated platforms: Heroku, Microsoft Azure Marketplace, or Manifold.

When a client creates a new dedicated instance, an HTTP request is sent from the reseller to a service called CloudAMQP-API (1). The HTTP request includes all the information specified by the client: plan, server name, data center, region, number of nodes, and so on, as shown in the picture above. CloudAMQP-API handles the request, saves the information to a database (2), and finally sends an account.create message to one of our RabbitMQ clusters (3).
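As a rough illustration of step (3), the sketch below shows how a request like this could be turned into an account.create message. The field names, the JSON encoding, the example values, and the function name build_account_create_message are all assumptions made for this example; the article does not describe the actual payload format.

```python
import json

# Hypothetical field names; the real CloudAMQP-API payload is not documented here.
REQUIRED_FIELDS = ("plan", "server_name", "data_center", "region", "nodes")


def build_account_create_message(request):
    """Turn a validated HTTP request (a dict) into a (routing_key, body) pair."""
    missing = [field for field in REQUIRED_FIELDS if field not in request]
    if missing:
        raise ValueError("missing fields: " + ", ".join(missing))
    # Only forward the fields the downstream service needs.
    payload = {field: request[field] for field in REQUIRED_FIELDS}
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return "account.create", body


# Example values are made up for illustration.
routing_key, body = build_account_create_message({
    "plan": "example-plan",
    "server_name": "example-server",
    "data_center": "example-cloud",
    "region": "example-region-1",
    "nodes": 3,
})
```

The publisher only needs to agree on the routing key and the message format with its consumers; it does not need to know which service picks the message up.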

Another service, called CloudAMQP-machine, subscribes to account.create. CloudAMQP-machine takes the account.create message off the queue and performs the actions needed for the new account (4).

CloudAMQP-machine triggers a whole lot of scripts and processes. First, it creates the new server(s) in the chosen data center via an HTTP request (5). We use different underlying instance types depending on the data center, plan, and number of nodes. CloudAMQP-machine is responsible for all configuration of the server: setting up RabbitMQ, mirroring nodes, handling clustering for RabbitMQ, and so on, all depending on the number of nodes and the chosen data center.

CloudAMQP-machine sends an account.created message back to the RabbitMQ cluster once the new cluster is created and configured. The message is sent on the topic exchange (6). The great thing about the topic exchange is that any number of services can subscribe to the event. We have a few services listening for account.created messages (7), and each of them sets up a connection to the new server. Here are three examples of services that receive the message and start working against the new servers.

CloudAMQP-server-metrics: continuously gathers server metrics, such as CPU and disk space, from all servers.

CloudAMQP-mgmt-shovel: continuously asks the new cluster for RabbitMQ-specific data, such as queue length, via the RabbitMQ management HTTP API.

CloudAMQP-SSH-monitor: monitors a number of processes that need to be running on all servers.
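The fan-out of account.created to several listeners can be sketched with a small in-memory model of a topic exchange. This is not how our services are implemented and it does not talk to a real broker; it only mimics the standard AMQP topic matching rules, where `*` matches exactly one word and `#` matches zero or more words. The queue names and the `account.*` binding are assumptions chosen for the example.

```python
def topic_matches(pattern, routing_key):
    """Return True if routing_key matches an AMQP-style topic pattern."""
    def match(p, k):
        if not p:
            return not k
        if p[0] == "#":
            # '#' may consume zero or more words of the routing key.
            return any(match(p[1:], k[i:]) for i in range(len(k) + 1))
        if not k:
            return False
        return (p[0] == "*" or p[0] == k[0]) and match(p[1:], k[1:])

    return match(pattern.split("."), routing_key.split("."))


class TopicExchange:
    """Toy in-memory exchange: copies a message to every matching queue."""

    def __init__(self):
        self.bindings = []  # list of (pattern, queue_name)
        self.queues = {}    # queue_name -> list of message bodies

    def bind(self, queue_name, pattern):
        self.queues.setdefault(queue_name, [])
        self.bindings.append((pattern, queue_name))

    def publish(self, routing_key, body):
        delivered = set()
        for pattern, queue_name in self.bindings:
            if queue_name not in delivered and topic_matches(pattern, routing_key):
                self.queues[queue_name].append(body)
                delivered.add(queue_name)
        return sorted(delivered)


exchange = TopicExchange()
exchange.bind("machine", "account.create")
exchange.bind("server-metrics", "account.created")
exchange.bind("mgmt-shovel", "account.created")
exchange.bind("ssh-monitor", "account.*")  # hypothetical wildcard binding

delivered = exchange.publish("account.created", b"{}")
```

In this model the publisher sends one message and every bound queue whose pattern matches gets its own copy, which is why a new listener can be added without touching the publishing service.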

CloudAMQP-server-metrics

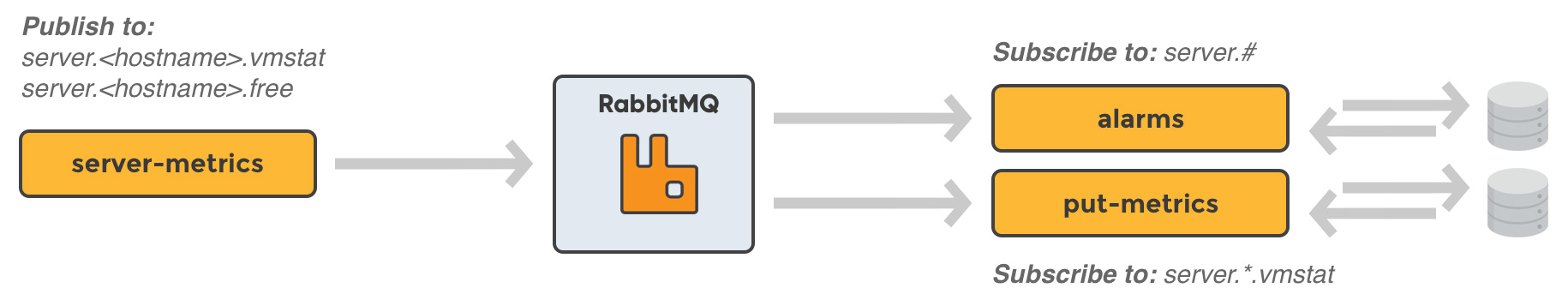

We have many other services that communicate with the services described above and with the new server(s) created for the client. As mentioned, CloudAMQP-server-metrics collects metrics (CPU, memory, and disk data) for all running servers. The metrics collected from a server are sent to RabbitMQ queues defined for that specific server, e.g. server.<hostname>.vmstat and server.<hostname>.free, where hostname is the name of the server.
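The naming scheme above is easy to capture in a pair of helpers. This is a minimal sketch: the function names and the example hostname are hypothetical, and only the server.<hostname>.<metric> shape comes from the description above.

```python
def metric_queue_name(hostname, metric):
    """Build a per-server queue name such as server.<hostname>.vmstat."""
    return "server.{}.{}".format(hostname, metric)


def parse_metric_queue_name(name):
    """Split a per-server queue name back into (hostname, metric)."""
    prefix, _, rest = name.partition(".")
    # The metric is the last word; the hostname itself may contain dots.
    hostname, _, metric = rest.rpartition(".")
    if prefix != "server" or not hostname or not metric:
        raise ValueError("not a server metric queue name: " + name)
    return hostname, metric


queue_name = metric_queue_name("example-host-01", "vmstat")
parsed = parse_metric_queue_name("server.example-host-01.free")
```

A consumer can use the parsed hostname to look up which instance a metric belongs to without the publisher having to include that information in every message body.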

CloudAMQP-alarm

Several services subscribe to this data. One of them is called CloudAMQP-alarm. A user can enable or disable CPU and memory alarms for an instance. CloudAMQP-alarm checks the server metrics it receives from RabbitMQ against the alarm thresholds for the given server and notifies the owner of the server if needed.
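The threshold check itself can be sketched in a few lines. The AlarmConfig type, the percentage units, and the metric names used as dictionary keys are assumptions for this example and are not taken from the CloudAMQP-alarm code.

```python
from dataclasses import dataclass


@dataclass
class AlarmConfig:
    enabled: bool             # the user can switch each alarm on or off
    threshold_percent: float  # hypothetical unit: percent of capacity in use


def check_alarms(metrics, alarms):
    """Return the sorted names of metrics whose enabled alarm is exceeded."""
    triggered = []
    for name, value in metrics.items():
        alarm = alarms.get(name)
        if alarm is not None and alarm.enabled and value >= alarm.threshold_percent:
            triggered.append(name)
    return sorted(triggered)


alarms = {
    "cpu": AlarmConfig(enabled=True, threshold_percent=90.0),
    "memory": AlarmConfig(enabled=False, threshold_percent=80.0),
}
triggered = check_alarms({"cpu": 95.0, "memory": 99.0}, alarms)
```

Here only the CPU alarm fires, because the memory alarm is disabled even though its threshold is exceeded. A notification step, such as sending an email to the owner, would follow for each triggered name.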

CloudAMQP-put-metrics

CloudAMQP is integrated with several monitoring tools: Datadog, Logentries, AWS CloudWatch, and Librato. A user who has an instance up and running has the option to enable these integrations. Our microservice CloudAMQP-put-metrics reads the server metrics it subscribes to from RabbitMQ and is responsible for sending the metrics data to the tools that the client has integrated with.

Why we use message queues (RabbitMQ)

All the small microservices in CloudAMQP are developed and deployed independently of one another. We think that several smaller services reduce the overall complexity of a project, even though the messages we send add some overhead. We use message queues to achieve decoupling, which helps us keep the architecture flexible. If a new bug is detected in CloudAMQP-machine (the service that creates and configures a new server), the service can be stopped, the bug fixed, and the service restarted. The queue of account.create messages will pile up a bit, but no data will be lost; CloudAMQP-machine handles the pending instance creations once the bug is fixed. Our messages are not allowed to disappear, and each message should be handled only once. Each service can be deployed, tweaked, and then redeployed independently without compromising the integrity of the application.
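The "pile up, but never get lost" behaviour can be illustrated with a toy in-memory queue in which a message is only removed after its handler succeeds. This is a simplified sketch rather than our implementation: a real consumer would typically use RabbitMQ's manual acknowledgements, and the handler and message names below are made up.

```python
from collections import deque


def drain(queue, handler):
    """Process messages in order; stop at the first failure and keep it queued."""
    processed = []
    while queue:
        message = queue[0]   # peek: the message stays queued while it is handled
        try:
            handler(message)
        except Exception:
            break            # no "ack": the message remains at the head of the queue
        queue.popleft()      # "ack": remove the message only after success
        processed.append(message)
    return processed


def buggy_handler(message):
    raise RuntimeError("bug in the service")


created = []


def fixed_handler(message):
    created.append(message)


# Messages pile up while the consumer is broken...
pending = deque(["account-1", "account-2", "account-3"])
first_run = drain(pending, buggy_handler)
backlog_after_bug = len(pending)

# ...and are all handled once the fixed version is deployed.
second_run = drain(pending, fixed_handler)
```

Because a message is acknowledged only after it has been processed, a crash between processing and acknowledging can cause a redelivery, so handlers in such a setup are usually written to tolerate seeing the same message twice.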

We can make changes as long as our API remains intact. If we want to use a different database backend or structure, we are good to go. We just need to replace that part instead of walking through the whole project looking for references and dependencies to the old service.

Today, most of our code (microservices) is developed in Ruby, where we use the Bunny client library to communicate with RabbitMQ. We also have a few services written in Go, Erlang, JavaScript, and Scala. One of the great things about message queues is that they always give us the option to write a new service in a completely different language, depending on what we think is best suited for the new feature.

This is just an overview of how we use a few of our microservices in combination with RabbitMQ. We hope you enjoyed reading about it. Let us know if you have any questions!

Do you have a story to tell?

If you have a story to tell about a project where you are using RabbitMQ, please get in contact with me. I would love to hear it!

Jeff Hara

Customer Success Manager
