"The SQL Server was used to persist data between the steps of the ETL (Extract, Transform and Load) process. We didn't have queues. Everything was done by Java processes that were scheduled by Windows. This architecture would not scale."

When part of the CloudAMQP team was in Rio de Janeiro, we were given the opportunity to meet the team at Cortex Intelligence and ask questions about their solution and how they were using RabbitMQ.

Background

Cortex Intelligence is a company working with Big Data solutions. They are building a platform that brings together all relevant data sources. With their web and mobile application, Big Picture, customers can easily subscribe to and build their own dashboards that combine relevant external and internal data feeds into a total view of their business.

The amount of relevant data keeps growing in volume, and it arrives in different formats and from different sources.

In the Data Store, customers can find, for example, product prices, economic indicators, specific market statistics, news, reports, and Facebook, Twitter, and YouTube pages. Cortex Intelligence uses techniques and tools to transform raw data into meaningful and useful information for their customers.

Architecture

We had the pleasure of meeting some people from the developer team, and we had a longer conversation with Roberto and Rodrigo about their usage of CloudAMQP. The main questions were: How did they build their BI solution and what techniques were they using? How were they using RabbitMQ? Why did they choose CloudAMQP?

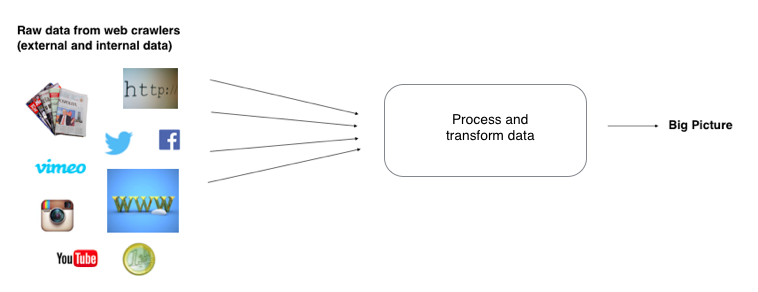

Cortex Intelligence sends out web crawlers (web spiders) that systematically browse and extract data from the Internet and internal networks. The framework used for extracting data is called Scrapy, an open source framework written in Python. These web crawlers take a copy of all the pages they visit and add them to a working pipeline for later processing. The data is collected, processed, and stored or forwarded with the help of a software service called SpringXD, which constructs streams of the data. SpringXD’s stream and batch workflows let you build pipelines where you can consume data from various endpoints.

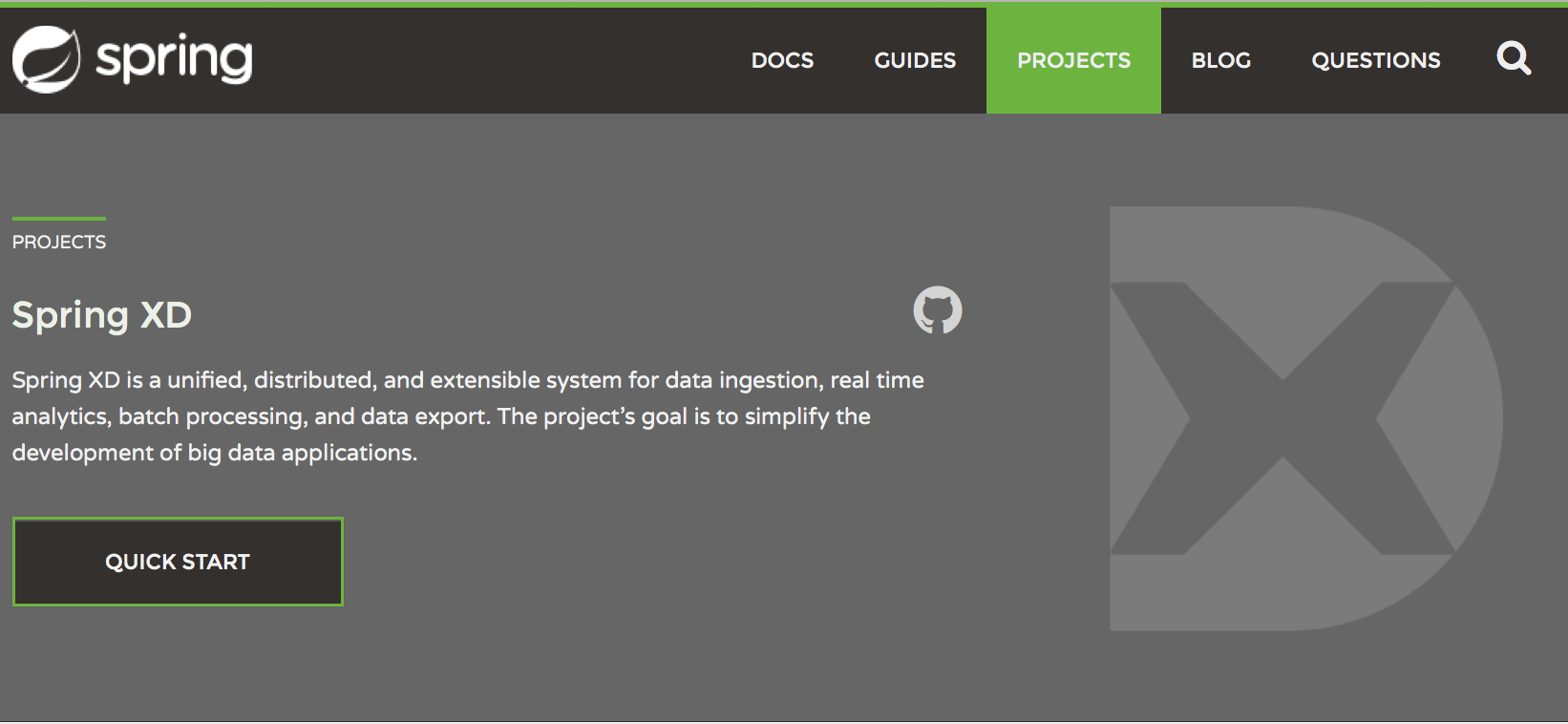

SpringXD

SpringXD is a powerful set of services that work with a variety of data sources. SpringXD's goal is to simplify the development of big data applications.

The service can be used to abstract the mechanisms behind ingestion, processing, and depositing of data - it’s useful when you need to collect, process and export data in real time. SpringXD helps connect all your data sources, applications and analytics environments in a manageable way. It can handle a large amount of information while still keeping operational performance at a high level.

When the information has been collected by the web crawlers, it is sent into the SpringXD pipeline, where it's processed and transformed into a predefined structure (JSON, for example).

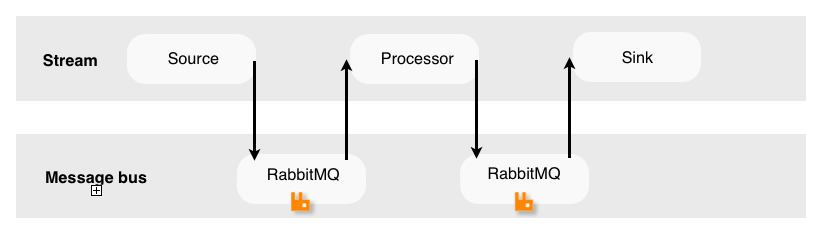

SpringXD allows you to build streams. A stream consists of a source and a sink, with processing steps in between. It ships with numerous sources and sinks by default, which can be linked together to make it easier to move and modify data between systems. The ingestion step is called a "Source", a processor is called a "Transform", and a deposit is called a "Sink". An example of a source is a Twitter stream (a source that ingests data from Twitter), and an example of a sink is a log (the log sink uses the application logger to output the data for inspection). In between, the data is pumped through a message bus. The pipe itself is typically backed by a distributed transport, and in Cortex Intelligence's case that transport is RabbitMQ.
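
To make the source, transform, and sink roles concrete, here is a minimal Python sketch of the same idea. It is not SpringXD code and not Cortex Intelligence's implementation: the function names, the page fields (`url`, `body`), and the in-memory `queue.Queue` standing in for the RabbitMQ-backed message bus are all assumptions made for illustration.

```python
import json
import queue


def source(pages):
    """Source: ingest raw items (here, hypothetical crawled pages)."""
    for page in pages:
        yield page


def transform(items):
    """Transform: turn each raw item into a predefined JSON structure."""
    for item in items:
        record = {"url": item["url"], "length": len(item["body"])}
        yield json.dumps(record, sort_keys=True)


def sink(bus, deposited):
    """Sink: drain the bus and deposit each message (here, into a list)."""
    while not bus.empty():
        deposited.append(bus.get())


def run_stream(pages):
    # In-memory stand-in for the message bus; the article uses RabbitMQ here.
    bus = queue.Queue()
    for message in transform(source(pages)):
        bus.put(message)
    deposited = []
    sink(bus, deposited)
    return deposited


if __name__ == "__main__":
    crawled = [
        {"url": "http://example.com/a", "body": "hello"},
        {"url": "http://example.com/b", "body": "hello world"},
    ]
    for line in run_stream(crawled):
        print(line)
```

Because each stage only talks to the bus, a stage can be swapped or scaled without touching the others, which is the property the stream model is meant to provide.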

RabbitMQ and CloudAMQP

If the collected information fell within the subjects chosen by the customer, it was shown to them in their dashboards in Big Picture. Cortex Intelligence divided different kinds of datasets into different queues, and by doing that they could also easily identify the information that was hard to handle and had become a problem for them.
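
The benefit of one queue per dataset type can be sketched in a few lines of Python. This is an in-memory illustration only: the `dataset.<name>` naming scheme and the item fields are hypothetical, and a real setup would publish to RabbitMQ queues and read their depths from the management interface instead.

```python
from collections import defaultdict, deque


def queue_name(item):
    """Derive a per-dataset queue name (the naming scheme is hypothetical)."""
    return "dataset." + item["dataset"]


def enqueue_all(items):
    """Place every item on the queue that belongs to its dataset type."""
    queues = defaultdict(deque)
    for item in items:
        queues[queue_name(item)].append(item)
    return queues


def backlog_report(queues):
    """Return (queue name, depth) pairs, deepest queue first."""
    depths = ((name, len(q)) for name, q in queues.items())
    return sorted(depths, key=lambda pair: (-pair[1], pair[0]))


if __name__ == "__main__":
    items = [
        {"dataset": "news", "payload": "..."},
        {"dataset": "prices", "payload": "..."},
        {"dataset": "news", "payload": "..."},
    ]
    for name, depth in backlog_report(enqueue_all(items)):
        print(name, depth)
```

With the datasets separated like this, a queue that keeps growing points directly at the kind of data that is hard to process.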

"Via CloudAMQP we could see when the Memory/CPU usage had spikes and we had alarms that were triggered when the usage was going over 90%. The consume rate of the queues was also important for us to view via the RabbitMQ management interface."

All kinds of generated errors were also added to RabbitMQ for later processing. Losing information was not an option for Cortex Intelligence. After the data had been processed, all information was saved into their DynamoDB and shown to the customers.

Cortex Intelligence has multiple servers running today (RethinkDB, PostgreSQL, etc.), and they had been using RabbitMQ in multiple projects before they decided to go with RabbitMQ in this project. The reason they chose a managed solution this time was partly the financial cost. Their requirement was that the server had to be up and running all the time, and if something happened, it had to be handled straight away. They needed someone to help handle unexpected situations and help with monitoring of the RabbitMQ instance. Cortex Intelligence liked the pricing model and the total service CloudAMQP offered.
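
The idea of queueing errors instead of dropping them can be sketched as follows. This is an assumption-laden illustration rather than Cortex Intelligence's code: `process_all`, `parse_price`, and the item layout are hypothetical, and a `deque` stands in for the RabbitMQ error queue.

```python
from collections import deque


def process_all(items, handler):
    """Run handler on each item; failed items go to an error queue, not the floor."""
    done = []
    errors = deque()  # stand-in for an error queue in RabbitMQ
    for item in items:
        try:
            done.append(handler(item))
        except Exception as exc:
            # Keep the original item next to the error so it can be retried later.
            errors.append({"item": item, "error": repr(exc)})
    return done, errors


def parse_price(item):
    """Hypothetical processing step: convert a scraped price string to a float."""
    return float(item["price"])


if __name__ == "__main__":
    scraped = [{"price": "1.5"}, {"price": "n/a"}, {"price": "20"}]
    done, errors = process_all(scraped, parse_price)
    print("processed:", done)
    print("queued for later:", [e["item"] for e in errors])
```

Keeping the failed item together with its error means nothing is lost: the item can be inspected and reprocessed once the cause has been fixed.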

"We were a small team and we had a lot of work to do, the Cloud was the best option - because we didn't have time to manage everything by ourselves. It is also really nice to know that there is a team ensuring that our RabbitMQ server is up and running, even when we have a lot to deal with, like millions of items being generated by our crawlers."

It’s always great fun to meet up with clients of CloudAMQP - thanks again, Cortex Intelligence, for a great time, and we hope to meet again!

Please email us at contact@cloudamqp.com if you have any suggestions, questions or feedback.

Jeff Hara

Customer Success Manager
