In this talk from RabbitMQ Summit 2019 we listen to Will Hoy and David Liu from Bloomberg.

Today, Bloomberg's engineering teams around the globe have access to a fully-managed RabbitMQ platform. This enables them to achieve scalability, flexibility, and maintainability, without needing to focus on the RabbitMQ Server details. We’ll see how one such team has used the platform to build a system at scale, capable of servicing billions of data requests each day.

Short biography

Will Hoy is a long standing member of Bloomberg's Engineering department, having worked in multiple financial data application teams, he now heads up the RabbitMQ infrastructure team.

David Liu is a software engineer on a Derivatives Market Data team at Bloomberg L.P. Every day, his team's services process millions of mission-critical requests from dozens of enterprise clients. RabbitMQ is the foundation for the microservice architecture David is building to support exponentially growing demand. Prior to joining Bloomberg, David graduated summa cum laude from Princeton University with degrees in Computer Science and Statistics & Machine Learning.

Keynote: Growing a Farm of Rabbits To Scale Financial Apps

My name is Will Hoy and I work at Bloomberg. I am joined here by my colleague David Liu and we’re very excited to be presenting today’s keynote–the story of how we grew a farm of rabbits. I’m going to start with that story and David will tell you of how we built an application on top of this infrastructure.

Bloomberg Technology by the Numbers

As you know, Bloomberg is a technology company that specializes in financial data and news. Our global clients are financial professionals, and decision makers that care about getting data factually with as little latency as possible. To facilitate this, we have 5000+ software engineers that basically function in two camps. The Application Developers develop applications for the terminal and the Infrastructure Developers create building blocks that Application Developers can use. There are a lot of data that come into our system, all day and every day. Billions of data pieces of middleware are used extensively and some of those are queues.

Growing a Farm of Rabbits - Will Hoy

I’m going to tell a story of how we took RabbitMQ usage from a handful of teams at Bloomberg to hundreds of teams. I’m going to talk about the goal–the evolution of our setup, and then finish with a summary of the challenges that we faced.

As already stated, we provide messaging middleware as a service. This is the goal of our team and Application Developers really don’t have all the time. They want to build and focus on the business logic for those applications. Although, I don’t think I’ve had any problem setting up a RabbitMQ broker as well as configuring it properly, it’s more about the time that they use to do this. So, they can use our system and they don’t have to focus on all of the nitty-gritty details of setting up the RabbitMQ broker.

Some Requirements that make it Hard(er)

You may be thinking that is it really that hard to set up? In fact it’s easy, and all you need to do in order to setup a broker is probably one line. But, it might not do all of the things that you need it to do. So, at Bloomberg we have certain requirements that make it slightly harder. Obviously, you want it to be highly availability and reliable message delivery. No one wants the messages to go missing. We also have environmental requirements. So, every machine in Bloomberg needs to reboot on a regular schedule. This means bringing down everything on a particular node and then bringing it back up again.

We also want to keep disaster scenarios in mind. We want to be able to survive and still be in business if we would lose an entire datacenter. And if we would lose an entire datacenter, we still want to be able to keep that reboot schedule that I talked about earlier.

(Some More) Requirements and Expectations

It turns out that our Application Developers have little expectations from us as well. So, we can pull a few rabbits out of the hat to deliver some of these.

Evolution of Our Setup

It turns out that we were late to the party in terms of RabbitMQ usage. At Bloomberg, there were already some teams that were using RabbitMQ in different setups.

‘Wild’ RabbitMQ Broker Instances

First of all we have the co-located RabbitMQ, where you have the broker and the application running on the same machine. You also have the case where everyone is using the default Vhosts because you know if you have a default Vhost, why do you need to create anymore? And, then there is the case where we do use different Vhosts for each application. The one consistent thing with all of these cases was that the teams were very happy to give up ownership of their RabbitMQ clusters and pass on the maintenance burden to our team.

Requirements - RabbitMQ Setup

Taking that into account with earlier requirements, we wanted to have clustered nodes. If we were to reboot one node, we’re not out of business. We want to make sure that the queues are available from every node. So, we have this ha-mode: all policy to achieve that since reliable message delivery isn’t something that you can just have on the server side. You need to be able to do things in the Client, and for that you need to switch on settings to achieve that. Also, we want to have dedicated clusters, so no more of this co-locating the application with the broker.

The Line between Application and Infrastructure

Taking all that into account, we needed to figure out the line of responsibility between infrastructure and the application. We do that on Vhost where on the right of the line we have the topology and the client API, where we let applications pick what all API they use. Obviously they are in the best place to figure out the required topology, queues exchanges, bindings, etc.

To the left of that, we’re managing the broker, the Vhost and all the policies that go with that Vhost. We also look it down as well. We own the admin for all of the clusters and don’t give admin away for any of our clients. In an enterprise system, you need to have an audit trail for changes. Changes don’t magically happen on production and no one knows why, who or how.

RabbitMQ Broker Instance

In Broker Instance, we have RabbitMQ on the left and you’ll notice that we have more than one Vhost in this broker. On the right, we have configuration files that basically define what should be on any given node. These also define the Vhosts, any plugins that we have, what version of the book should be running, and the entire configuration for that broker.

We use orchestration to make sure that what’s reflected in the configuration is actually what is there and monitored because you need to be able to see what’s going on. Inside these policies we quote things like connection, memory and queues. This is our way of containing how much any Vhost can take up. At this stage, we came across our first set of challenges with RabbitMQ.

Challenges with RabbitMQ

The first challenge that came up earlier in the discussion deals with disconnects. It might be fairly obvious that if you get disconnected and if your application is using RabbitMQ you need to reconnect. As I mentioned, we run clusters where if one of the nodes goes down, you’re going to get disconnected. It’s important to understand that if your application reconnects it doesn’t mean that we have an outage. It just means that one of the nodes decided to disconnect you for whatever reason. So, you should reconnect and end up at one of the other available nodes.

It’s also important for your application to re-declare any topology that it had relied on to do something. This was a bit of a ‘gotcha’ for us because if you see the small print for this highly available (HA) sync mode automatic, you’ll notice that it’s not for free. When a node comes up and needs to be synchronized, it’s going to lock-up that queue on the entire cluster. Most of the time good clients will keep those queues for a minimum size. This is not noticeable in the normal case, but when you have all the messages in the queue and that node is brought up.

Resource leaks. So it wasn’t uncommon for us to have a node in a cluster where the memory skyrocketed off the moon. This happened almost on a weekly basis. And then we would have to bounce that node. We developed a script called the Hammer which we hammered down that node. We have some other scripts as well, which I’ll talk to you about later. So, how do we deal with those challenges /disconnects? Because it’s a corner case application and developers generally do not test for that. We made it happen a lot more frequently for them in the development environment. We made disconnects more than a daily appearance and this really trained their application code to reconnect each time. We were in a good position to be able to do that. These last two were really the issues that we dealt with through upgrades. So, we knew we needed to upgrade because these problems were fixed.

Architecture Evolution and Self-Service

What used to happen, one message at a time, can be done in batches now. For weeks this was a bug which was fixed. At this stage of evolution, we had tamed the rabbits that we had in the wild and we knew what we wanted to run with going forward. But, it was painful as those configuration files that I’ve talked about were manual. Hence, when onboarding a new client we would have had to make some manual changes on all of them, which was quite slow. We spent a long time building self-service systems where Application Developers can come along and they can click to register a Vhost name and then they get a quota for free. They get an endpoint that they can connect to and they can go off and develop the application in whichever tier they need to.

This is when we came across, Mike hinted at earlier the next challenge with rabbit which was that one does not simply upgrade the RabbitMQ. So, it’s been earlier talked about and there are three options that you have.

Upgrade Options

Full stop. This is basically an outage when you bring down all the nodes. You used to have them up in the reserve order, you don’t anymore. But, this is an outage and we expect Application Developers to write applications that run 24/7. If the infrastructure doesn’t run round-the-clock, they can’t do that right? So, that’s not an option for us.

For the rolling upgrade this is something that has become more feasible, but you can’t do it all the time. You can’t rely on it all the time because there are backwards incompatibilities every now and again and you’ll have some Erlang issue as well.

Blue Green, Good in Theory

Blue Green is where it’s at and if we see there are the steps that you have. This was good; however, it didn’t work for us. This is due to the aspect of migrating consumers over. Before we load up our brokers, we have multiple Vhosts running on a single broker, which means that multiple teams are using that one broker. So, being able to synchronize all of those teams and get all those consumers migrated across to a new cluster all at the same time isn’t something that we can do.

Proxy-Fronted Brokers

We needed a solution for that problem and we built our own using a proxy. We built a proxy that understands the AMQP protocol. Using the protocol we fished out the Vhost which is the juicy piece of information. Using the proxy we can say App A should go to Broker A and App B should go to Broker B. We can drive that proxy for whatever mapping we want for those Vhosts. This is good because before you would upgrade your broker in one shot with all the Vhosts all at the same time, which is quite risky really. In this way, you can upgrade Vhost by Vhost and can manage that risk a little bit more easily.

It is easier to do load balancing as well so if one broker is running particularly hot you can move some more Vhosts over to another broker. It also enables you to lock down the broker. The only thing that needs to connect to it now is the proxy so you don’t have to expose each broker to your clients anymore.

Vhost Migration in Action

But, there’s some downside as there’s an extra point of failure and there’s additional latency. However, these are just small prices to pay. So, it turns out we’ve got node balancing. Let’s see how it works. We’ve got this application that’s in Broker A and we want to move that across to Broker B. How do we do that? Firstly, we use some orchestration by creating the Vhost. This is just registering the name space and putting these policies that are there that I talked about earlier. Hence, we are ready to move over everything to Broker B. Here’s where we’re going to do something weird like freezing the Vhost so this is freezing all the data flow between the application and the broker. Even if we disconnected the application, we’ve already trained them to reconnect again and again. So, we don’t want that and we only want to freeze them and prevent them from making any changes to the state.

After this we can safely transfer over the topology and then shove all the messages across. Now, we can update the mapping and disconnect. Upon reconnecting, the application now in Broker B can be cleaned up due to any state that we had in Broker A. This is all and it’s very similar to the Blue Green operation. In case these disconnect, the whole transition of moving the Vhost over takes less than a second. So, it’s not a huge price to pay for that application. It could be optimized as well if we had more details about the consumer and publisher connections.

Rampant Growth

We’re now at this stage where we solved a lot of our problems and we had more Vhosts. There was a lot of Vhost growth (the same joke that we had earlier)! We grew like rabbits in the wild. If you have Self-Serve at No Cost you gets Lots of Vhosts. With more Vhost, it means more brokers and as the song goes – more brokers, more problems.

Challenges with RabbitMQ

Network Partition Strategies

This is more up-to-date with where we are today. Network Partition Strategies essentially means you operate on the scale that we operate, and you don’t want any one single event to knock-out or affect multiple brokers all at once. If that event happens such as a Network Partition, you need an automated strategy to deal with that. RabbitMQ offers such a strategy and ideally we would want to pick the pause-minority strategy since we care about consistency and we don’t want to lose any messages.

Unfortunately, for us we had a lot of problems with pause-minority. We couldn’t get consistent behavior; we think it’s down to rebooting our nodes. We tried to change the make-up of the cluster using the “forget node” to try and paper over that, but we couldn’t get consistent results. So, we had to develop our own solution.

Queue Misbehavior

Every now and again you do get queues that don’t behave the way you would expect. In this case, we have a queue that has zero consumers. But, those are actually consumers connected to it. For these types of problems, there are two things that you need. First you need detection to catch the problem: the first problem, and then some kind of remediation to deal with it. It’s generally something that you would need and you want your clients to know that they have a queue here. It’s got messages coming into it, but those messages are being consumed. So, you have some kind of standing queue.

Alarms go off and we see this problem! We use a snippet tool – snipper, zapper, sinker, and the hammer as well to deal with these types of problems and you have to know which tool to use to do the fix.

Truly achieving reliable message delivery

This is the big one. Truly achieving reliable message delivery. Typically what happens is that you have clients coming to you and saying, “So I used RabbitMQ and it lost my messages”. RabbitMQ gets a really bad name because Application Developers come along and they pick-up RabbitMQ for the first-time. They use one of the clients and reliable message delivery isn’t on by default. So, they end up losing messages and they don’t have various settings switched on unless you could go in and turn on tracing to figure out exactly where the messages are getting lost. We have a checklist for our clients and we go through this checklist with them, which nine times out of ten will remediate the problem.

The Client Checklist

- Producer Confirms

- Consumer Acknowledgements, After Processing

- Dealing with Dead Connections - Heartbeats, Reconnect Logic

- Using a Dead Letter Exchange (DLX) to catch Unrouteable Messages

Producer Confirms is important because once you send that message to the broker it has to go round with the classic queue case. All of the nodes of that cluster must work well before you get that confirmation. Let’s say you get the confirmed back and during disaster scenarios your message is still on the broker and you are safe.

Dealing with Dead Connections is something that happens quite often. It’s a case where you often have a consumer task and it’s getting messages from a broker. If it doesn’t get messages from a broker, it’s got my business. Unfortunately, you can lose the connection between the broker and that task. If that happens, it’s just sitting there stuck, waiting for something to happen. It doesn’t necessarily always trigger an exception in the client API. So, using something like Heartbeats will protect against that case.

Also, we saw the case where you can publish to an exchange and that exchange has nowhere to put the message. You get pretty good performance if you do it. But, if you do care about these messages, you can have a DLX – a Dead Letter Exchange to catch those. Reliable message delivery is not turned on by default and this is one of the biggest problems that we see, again and again.

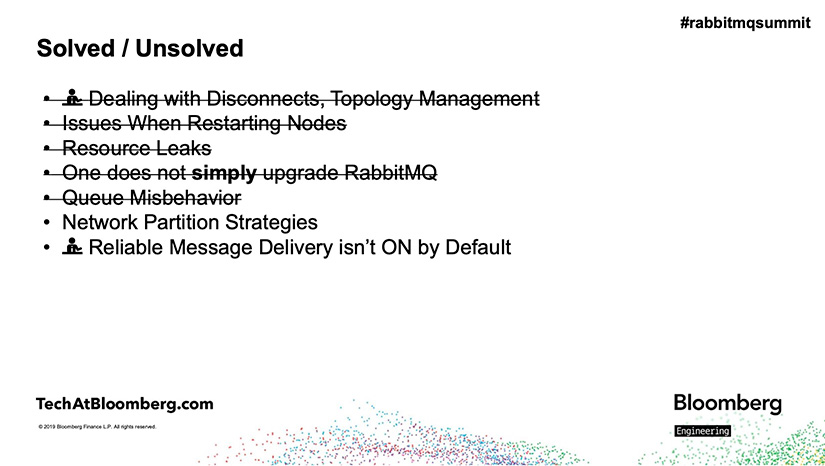

Summary of Challenges with RabbitMQ

We started with the challenges such as dealing with disconnects, Topology Management, node restarting issues, resource leaks, Queue Misbehavior and more, and we’re now left with Network Partition Strategies and Reliable Message Delivery that isn’t by default. A lot of these were fixed by the community by upgrading. So, it is very important to go to that next version and 3.8 looks like it’s got a lot of good features to be able to get rid of those.

We might not need Network Partition Strategies anymore if we got Quorum Queues. We’ve got Reliable Message Delivery though we need to do some work with the clients (as already said before in the panel). It would be great if we prioritized Reliable Message Delivery over performance by default. Then more application developers would probably lose fewer messages.

Using RabbitMQ to Scale Financial Applications

by David Liu

So, how did we build a scalable financial application on top of this infrastructure? I work in the Derivatives Market Data Team at Bloomberg. In fact, you must already know that the derivative’s market has grown very rapidly in the last 20 years. So, in the graph below, on the right hand-side you’re looking at the market size overtime and you can see that in recent years the market has grown into well over 500 trillion dollars. To give you some perspective into what this number indicates, the graph on the left-hand side shows this value compared to some well-known market sizes such as the U.S stock market.

As the engineering team, we’re now posed with the problem of how do we build a system that is capable of handling billions of requests every single day?

Why RabbitMQ: Simplify Routing

On top of that we also have a second problem. We’re dealing with some pretty complex data. So, the first reason is that the data is really complex because we’re a centralized market data provider. Whenever we turn data from a lot of different areas like inflation, credit default swaps, and foreign exchange, each one of these has its own nuances and intricacies. The second reason why the data is very complex because it is multi-dimensional. So, in the images below you can see examples of what I’m talking about like an interest rate yield curve or even a three-dimensional volatility queue, if you combine both of these factors together you arrive at my team’s overall mission which is to honor a high volume of market data requests in a reliable manner.

It’s with that mindset that we began our RabbitMQ journey. I’m now going to walk you through the process of adopting RabbitMQ, why we chose it, and how we addressed one core problem in particular, which is: complex routing. So, what is this complex routing problem? The first thing to know is that while my team owns one market data service, it is actually deployed to a lot of clusters. There are several reasons for this. The first is that given that we expect billions of requests every single day, it makes sense we wouldn’t want to put all of our eggs in one basket or cluster in this case. The second is that there are security and privacy policies in place that prevent certain market data requests from intertwining with each other.

So, given with both of these we came up with a setup where each cluster is roughly responsible for any given financial data works stream. Naturally, the challenge that arises then is that each application needs to control where its market data request is routed to. When my team arrived at this problem a few years ago, we found this network particularly difficult for three main properties – the network was granular, large and flexible. So, let me get into what I mean by each of these terms.

Granular – Starting with granular, it’s not a one-to-one mapping between each of these applications and one of our clusters. In reality, each application needs to have control over every single request and where it’s routed to. This is happening at a millisecond level.

Large - The second feature that made it difficult is that this network is very large. So, I only have four applications shown here. In reality, there are thousands of applications running at Bloomberg, connected to our hundreds of service deployments. You can imagine that this grows very quickly.

Flexible - This network is constantly changing. Just like a service provider, we’re changing our deployment, and so is the application’s team. Hence, on any given day, this network changes and we need to be able to adapt to that.

So, in summary the solution we find for this complex routing problem needs to be able to handle all of these three properties. It’s for this reason we arrived at RabbitMQ. So, with RabbitMQ we were still able to preserve the complex routing happening behind-the-scenes and we could really focus on the part that was actually relevant for us – the endpoints.

Let’s say an application would like to send two market data requests to our service. The only thing that it needs to do is populate the RabbitMQ with the appropriate Service ID. Once it does that, our topic exchange handles the rest. So, let’s say Application 1 would like to send to our Blue cluster deployment. It populates the routing key. That message is sent to the topic exchange and routed appropriately. For the Red message to our Red cluster, same deal: populate the routing key and everything else falls into place accordingly.

Let’s say someday, in the future, we would like to bring up a new Purple deployment. Well, the application can follow the very same logic - populate the key and everything else is the same. Use the same connection to the exchange and the message simply arrives at the intended destination. This was really useful to us because we were now focusing on the relevant parts – the Producer where we populate the message body, and the Consumer where we declare the queue. Everything else in between is handled for us. We don’t have to worry about it.

Routing Success Story

If we fast-forward to today, my team has found RabbitMQ to be a huge success story. So, looking at the graph shown here, you’re seeing the number of messages we publish over every single week. In recent months, this value has reached well over 200 million messages every single week and at peak times this reaches to tens and thousands of messages in just one second.

This leads to the second topic on how did we scale RabbitMQ out-of-the-box? To do that, I’m going to focus on one particular aspect of our architecture. In the diagram, you saw our original setup before, which were applications, directly connecting to the Vhosts. While this was enough in the beginning as our team grew and the number of applications calling us also increased, later we ran into a few problems.

Issue 1: Losing Control of Connections

The first was that we just lost control of the connections made to the Vhost ad this manifested into two primary ways. The first was that we lost control of the types of connections. So, many of you probably know that there are permanent and temporary connections. When we directly exposed the Vhost, the applications were free to make any number of temporary connections to our system. This would immediately lead to some pretty wasteful connection churn and undermine our performance.

The second way this issue manifested was that we were vulnerable to exceeding our policy quotas. As already stated, every Vhost has a policy that includes a quota on the number of connections. By exposing the Vhost, we made ourselves vulnerable to violating this policy as shown here.

Issue 2: Excess Information Exposure

The second issue that we ran into was exposing a lot of information to the client. Again, as already discussed earlier, in order to contact the Vhost, the application needs to use a certain kind of API client library. In our case, our client library contains a lot of details like how do we populate the message, how do we handle Acknowledgements (to be discussed later). But, right now, what's important is that this ultimately hindered both sides from the caller point-of-view. Now, their development was tied to our library rollout and from our service point-of-view. They made it harder to make changes to the list library and we were also revealing a lot of details that should have been hidden from our callers.

Message Flow

So, with these two issues in mind, we added a new component to our infrastructure - a gateway. The gateway is the only component connected to our RabbitMQ setup. How these all come together? Just as before, when an application is sending a market data request to our system what first happens is that the message is sent to the Gateway which forwards it to the exchange. The exchange routes based upon the routing queue. Now, our message is sitting on a queue dedicated to the work stream. An available consumer, can then take up that request and generate the market data to populate a response.

That response containing the data is sent back to the same exchange placed onto a private response queue and then the data is returned to the caller who can proceed with its high-level business logic. So, this is how all the pieces come together and the overview of our system.

Scenario: Scaling Gateway from Throughput

Now I’m going to go into the details of how we scale this system up and in particular I’ll focus on the individual components and leverage a few examples from our financial domain. So, the first relevant example from the financial domain is to take a look at the kind of traffic my team receives.

The graph here depicts the number of requests we receive over the course of a typical day. It really stands out how spikey traffic is. In particular you can see a huge spike at around 5 pm. This could be for a lot of different reasons. Maybe one of our callers is running a huge batch job after the stock market closes. Many different reasons here, but from our perspective one key challenge we need to take on is how do we take in as many messages as possible before dropping them, the primary way we tackle this is by scaling the number of gateways connected to our exchange.

Well, one gateway can surely handle a lot of Market Data Requests and ingest them, but it has its limits. So, by scaling the number of gateways we have the flexibility to adapt to different throughput needs.

The second financial scenario that is relevant here is that again given that we’re a centralized provider, we are returning data to a lot of different teams. Some might be running highly interactive UI applications and others are running longer back-end batch jobs. So, to drive that point even further the graph here shows the average response time we give to our different clients at a higher-level. In order to honor these different SLA’s the primary way we do this is by scaling the number of consumers dedicated to that work stream. In this way, we can drastically reduce the latency for any given client.

So this might be a simple technical idea, from a business perspective. However, this offers enormous value because we can now adapt to each new client’s individual SLA and needs.

Message Reliability & Tradeoffs

If we take a step back, you’ve seen why my team chose RabbitMQ to handle a complex routing problem and how we scaled it up to handle the unique financial scenarios.

I’m now going to conclude with the final topic of Message Reliability. In particular, I’m going to draw on one point from the Client Checklist, which is Consumer Acknowledgement. While many of you already know how this works, I’ll walk through a brief example of how Acknowledgements are used in our system. So, as with before, an application is sending a request to our service. Everything in the beginning is the same. Just like before, the message is sent to the gateway exchange, routed, and it’s sitting on the queue. But, now when an available consumer takes up that message, we don’t actually acknowledge it immediately - it’s still sitting there.

The Plan Backfires: Poisonous Messages

So, in the detrimental event that our consumer crashes while processing the message maybe the data is not in the database, or message is constructed improperly. Well, even if it does crash, our request is still sitting on the queue and the next available consumer can take it up.

Let’s say in this example, we’re successful. We generate a message and only then do we return the response to the exchange, acknowledge, and then the application has its data. It’s unaware that all this is happening in the background. It can just simply count on reliable market data. As with any simple solution, there is the opportunity for a backfire and in our case we have the pretty infamous one – the Poisonous Message.

For those who are fortunate enough to not have to deal with Poisonous Messages, let’s take a look at how a single Poisonous Message can take down our system. Well, this Poisonous Message is sitting on our queue, arrives at our service and in the process of processing that request, we crash! Again, there’s a corrupt request or there can be any number of reasons.

But, now the interesting part is that the logic that we put in place to provide reliability is now undermining our entire system. Because that request is now repeatedly sent to all of the available consumers and quite quickly just one out of billions of our Market Data Request Managers to take down all of the consumers. For a team that promises 24/7 high-availability to all of our callers, this was really scary and unacceptable.

Possible Solutions

In a response we considered several solutions. The first is just to “nack” or not acknowledge all of the redelivered messages with the redelivery flag on. This would have handled a lot of our cases like the Poisonous Message just (shown here) would have been tackled. But, in our Bloomberg use case, we have seen that there are actually a lot of scenarios where it is somewhat reasonable for a message to be delivered. Maybe the machine was being turned down (ridiculously) during the first-time of being processed. So, we are also aware that obviously in 3.8 there is Quorum Queues and for our interest there is a redelivery account in these Quorum Queues.

This would be really useful, but of course as you’ve seen already there are a lot of challenges in our upgrade process. So, we went with the third route, which was essentially to take matters into our own hands and implement the redelivered account ourselves. So, let’s see how we did that.

To implement the redelivery account we added yet another component which is a Redis cache. So, when any message arrives at our queue, now it reaches one of our service consumers. The first thing it does after reaching the service is to just look at the headers, extract the unique message ID, and then contact the cache and place that unique ID in the cache along with the count saying how many times have we seen this and a TTL for some bookkeeping purposes.

In the event that this is a Poisonous Message and we do crash while processing it, we still have that information on hand. With our acknowledgement logic when it’s redelivered and sent to our second instance it does the same thing – looks at the headers and updates the cache with the count.

Fortunately when our message arrives at the service for the third time, we’re now prepared. So, when it arrives our service goes to the cache and says, “Hey we’ve exceeded a certain threshold”! With this knowledge in hand, the service can safely acknowledge the request before processing it. Thus this harmful message is removed from the queue and our remaining service instances are preserved. They can handle all the subsequent market data requests while sitting on the queue.

Takeaways for Application Developers

- Scalability - Over the course of our RabbitMQ journey of solving the routing problem, scaling it and adding acknowledgements, the first takeaway we have is that RabbitMQ is very scalable for our Bloomberg use case. You saw it tackle a very large routing web as well as handling a billion per day throughput.

- Flexibility – We could configure the topology to our business needs and place acknowledgement logic that met our liability requirements.

- Reliability and Maintainability – It’s very well maintained, especially given that we’re operating on top of a very well-groomed infrastructure and our application is highly-available for our market data callers.

[Applause]