RabbitMQ Summit 2018 was a one day conference which brought light to RabbitMQ from a number of angles. Among others, keynote speaker Gavin M Roy cover AMQP in the context of its use in RabbitMQ.

In this talk Gavin will cover AMQP in the context of its use in RabbitMQ with a premise that if you don't fully understand AMQP, you don't understand RabbitMQ. Gavin will discuss the by-directional RPC behaviour, connections, channels, objects, methods, and properties.

Keynote: Idiomatic RabbitMQ

Good morning. I am really excited to be here at the very first RabbitMQ Summit and the first speaker, a lot of pressure.

The journey for me with RabbitMQ started longer than I care to admit. I was an early adopter, fleeing from another message bus technology and trying to stay within the open source space. In that, I was forced to learn a lot of things really early that have helped me develop application architectures that use RabbitMQ that hopefully most of you wouldn't have to think about these days.

To grok RabbitMQ is to know AMQP 0-9-1

One of the very thing early things that came to me was that to truly grok RabbitMQ, just at the deepest level, is to know AMQP. Specifically, these days, 0-9-1. Back then, 0-8. That's kind of a weird concept. When you think about how you use other technologies, database servers, web servers, we generally don't think about the low-level protocol or the model implemented in the protocol.

Hopefully, if you're a web developer, you're thinking about HTTP and using the constructs in it but you're not thinking about it at, say, the layer 7 framing of how it works on the TCP stack. To some degree, that knowledge with Rabbit is truly helpful.

Beginner Questions

When you think about, as you go through the lifecycle of being a developer who's using RabbitMQ, you start off with these questions of “How do I take a message and put it in a queue? Wait, what's an exchange again? Wait, I've got to publish to an exchange and then it gets into a queue. How does it get into the queue? Oh, a routing key. Okay.” You start working through your mind this idea of the model, of how a message flows through the system to get to where you have it in a queue and then how do you get it out of the queue.

Next Level Questions

Then, you're writing your application. You're working through this process and you start to run into issues. How many of you have applications in production where there are behaviors that you didn't expect in your application? That's it?

RabbitMQ’s Identity

A lot of times the problems that you encounter in your application where the behaviors are not what you're expecting is because there are some things around the AMQP protocol, at the lowest level that RabbitMQ implements, that maybe aren't as straightforward as you would expect when you have experience working with other remote systems. That's primarily because RabbitMQ’s identity has evolved and is focused on this standard called AMQP.

AMQP itself is a standard that is a low-level protocol. That's like the framing on the wire, how data gets marshalled and unmarshalled. It's also the classes and methods around how that low-level data says what it's doing or what it wants. And then, it's the model of the data as it exists in RabbitMQ.

Advanced Message Queueing Model

If you bear with me here, we're going to we're going to dive a little deep and then we're going to come back out. If I'm going too deep for this early in the morning, I apologize, but I promise you it's actually really early where I'm from and I'm usually not awake for another five hours so bear with me.

At the highest level you have the model. This is the easiest part to understand but these are the beginning questions. We have exchanges. Really, when it comes down to it, exchanges are all about routing. You have the message queue that accumulates things. And then you have the binding.

One of the things that I always have fun when I'm working with a junior developer and I'm explaining this for the first time and I tell him about routing keys and then I tell them about binding keys. They ask me, “What's the difference between a routing key and a binding key?” Well, they're the same thing, kind of. It depends on the exchange.

Advanced Message Queueing Protocol

And then we have the protocol. It's really the foundation of how everything in RabbitMQ talks - everything. When things are happening inside RabbitMQ, you're using any of the functionality. For example, let's say Shovels or you're using any of the protocol adapters, which I'll get to, you're still talking AMQP 0-9-1. It just happens to be doing so internally as opposed to on the wire.

Over on the right side here, we see basically the state that every connection has to go through when it's establishing a conversation with RabbitMQ. The connection starts. We basically send a salvo saying, “Hey, we want to talk to you in AMQP” and the server says, “Okay, let's start the connection.” It sends this response back. And then, we have to say, “Okay, we got your response saying you want to start a connection.”

We're going to skip over secure because that's really not implemented most of the time.

We want to tune the connection. How many channels can we have? What's our maximum frame size? A few other things. And then, as a response, we say, “Okay. We got your tuning request. Here's what we would rather do.” And the server responds with, “Okay. Your connection is open.” And we say, “Okay. We understand. It's open.” Great, pretty chatty. That's one of the reasons why short-lived connections to RabbitMQ are inefficient because that's a quite a few frames to go back and forth before you can ever do anything. And then, the next step is you have to open a channel.

One of the things that you'll notice, when we were going through that diagram, is that we had this prefix called Connection. And then we had, on the right side, Open, OpenOk, Tune, TuneOk.

AMQP Classes and Methods

Connection, in the AMQP protocol is a class. The open and open okay are methods. The protocol basically calls out for these different top-level classes/connections.

One of the interesting things, it's a bi-directional RPC protocol. We have something that's kind of weird in the way that it was written for a normal developer. If you think about how you would have a channel and method, you're going to get a response back. But because the way the framing works, your responses actually come in the way of method calls as responses with arguments. For example, the server sends a Connection.Start - connection being class, start being the method. And we're going to respond with a method call of Connection.StartOk. And we're going to pass arguments along with that. It's a little counterintuitive from that perspective.

What you'll basically see, as you go through here, and I tried to highlight some of the important methods for these different classes is that almost all of them have a, basically, verb “okay” response method to them with some exceptions - some important exceptions.

When you think through the lifecycle of what happens at the low level, when you're establishing a connection to RabbitMQ, you're going to basically establish that open connection. And then, you're going to open a channel. And then once you've opened the channel, then you're going to do all the work. You want to maybe declare an exchange, declare a queue. Then, you're going to bind the queue to an exchange. These have these nice call responses call-to-call type of behaviors until you get to the basic class.

I don't mean to be disparaging in any way but I feel like that was a little lazy catch-all class name because it basically revolves around everything that doesn't have something to do with these top-level model objects when you're publishing messages, consuming messages, getting messages. Maybe it would’ve been better called message. I don't know.

Anyway, one of the interesting things about basic calls is basic.publish, on its own, inherently, the thing that you use to send the message doesn't get a response.

Finally, one really important thing that any programming RPC-style behavior has are exceptions. And so, exceptions, in the way of the AMQP protocol, come as arguments that are passed by way of a Channel.Close method call. That's a really important thing to understand.

When it comes down to it, channels, in essence, primarily are for the coordination of what messages are being sent across the bus when I'm at the message layer and letting your client know when it's done something wrong. You tried to access a queue that doesn't exist, you published a message and you required that Rabbit route it somewhere and it failed to do so. And so, that really is what channels are about. It's important to understand what that is.

Oftentimes, a beginner mistake might be, “Well, I'm going to have this application that publishes messages from all these different parts so I need to have a channel for each different part.” That's actually costly when you understand what's going on underneath the covers in RabbitMQ Q because those are different objects with statistics associated with them. It's keeping track of the message rate. It's keeping track of how many bytes are passing through. And it's useful, for example. if you want to coordinate behaviors because you want to know that this part of the application is causing exceptions within the RPC. But, when you can, try and keep those things small.

AMQP Framing

This is where we dive deep. We’ll go at this pretty quick. On the wire, at layer 7 - everybody understand what I'm talking about layer 7? I'm talking about basically, in TCP/IP, in the space that the TCP frame gives us to transmit data, we have binary data that is basically formatted like this. Pretty much, every single frame that happens looks like this. And always, there are exceptions but, in essence, we have a frame header with the frame type. This number that basically says, “How am I going to format the payload?” Then, you're going to have the channel number, and the number of bytes inside the payload, and an end-frame delimiter. This is somewhat opaque in that, from a low-level perspective, what we're doing in all client libraries, in essence, is we're looking for this part, and we're looking for this part, and then we're taking that and passing it to a parser to try and figure out what's next.

Message Publishing Anatomy

Next, we have what goes in that that frame part I was talking about. I wanted to break down what happens when you publish a message, when you think about communicating. So, for example, when we when we make an analogue to HTTP and we're making a post call. At the top part of the post call, on the wire, we're going to have headers that say, “We're going to post this. And it's coming from here. And here are how many bytes are there.” And then we have this, basically, opaque payload.

AMQP’s a little different. We basically have a minimum of three frames. Remember, a little earlier, when I was talking about the connection-state negotiation, one of the things that we negotiate is how big or how many bytes can each frame be. The default is basically 128K.

We send this frame that basically says, “We're doing a basic publish.” And then, we send information about what we're going to publish, that's where the message properties live, and information about the payload. And so, if you're sending a 1 megabyte-payload, you're going to send multiple of these body frames with opaque chunks.

Basic.Publish Frame

The method frame - the very first frame, the Basic.Publish, keeps our class, our method. And then, the first argument is the exchange that we're going to publish to. Now, this could be empty if you want to use the implicit direct publishing to a queue behavior or it could be your exchange name, and then the routing key value, and then the mandatory flag. Every message that's published has that.

Content Header Frame

And then, every message that's published has this. This is the content header frame. You'll see, there's a pattern here. We have these containers that then break down to another container, that then break down to another container.

In this case, we're saying, “We're a content header frame on channel number 1, and we have 45 bytes worth of data. And within that, we're keeping track of the body size. And then this awesome number here, there's a little algorithm with bit packing to determine what fields, out of all of the message properties, get carried along. So, content type, app-id, the timestamp, keep it in memory, base delivery mode.

Body Frame(s)

Finally, we have the body frame. Notice, this is a different frame type 3. And, on channel 1. We have 55 bytes worth of data. And, in this case, we have a JSON payload.

The JSON payload is opaque. The actual message body that goes into RabbitMQ is opaque but it's the only thing about your message that's opaque to RabbitMQ, that it doesn't interrogate when your message comes in. When you're publishing to RabbitMQ, it actually is looking at, for example, the content headers and decodes the AMQP frame every time.

How many of you have heard the advice or general philosophy that headers are slow - the AMQP message property headers? Only a few of you. That's cool.

It actually can be true. As a generalization, it's not. But one of the reasons why it can be slow is, basically, for every message that you have, you have this arbitrary key value table that is getting decoded every time a message comes into RabbitMQ. If it's too big, it basically needs to allocate more memory. So, not only does it have the binary payload that comes in, but then it has to basically deserialize all that wired data into objects that it uses.

The interesting thing is it's not the headers exchange that's slow but merely the act of decoding the frame if you have too many values in the header fields themselves. That's it. That was the low-level stuff. Did we go too low?

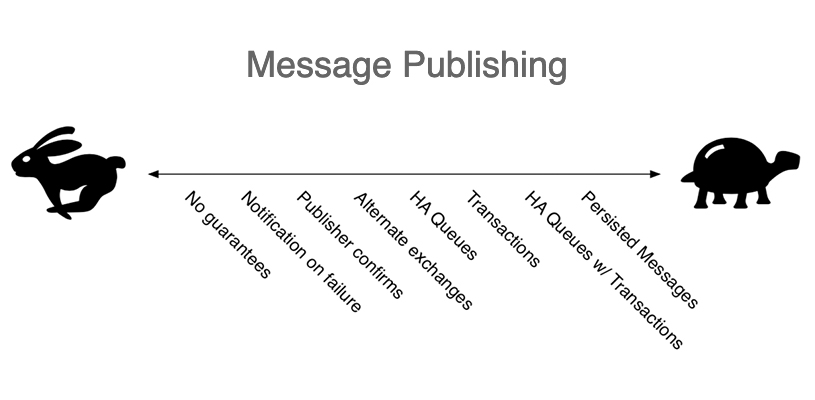

Message Publishing

Message publishing. It's interesting when you think about how do you answer questions about RabbitMQ. You may answer them at a high level but really what you're doing is you're answering questions about the protocol or the model.

For example, on this graph, we talk about how do we publish messages as quickly as possible? At the fastest end, there's no guarantees in message publishing. The reason why that's fast is it's fire-and-forget. We're just sending those three-plus frames for every message as quickly as we can. And if something happens to it, in RabbitMQ, that's great. If nothing happens, I don't care.

For me, in the ways that I use RabbitMQ, that doesn't work for our business. I would imagine that for most of you that's the case as well.

Next, as we go down here, you could see - as we get to safer and safer guarantees around message delivery, it gets slower. So then, you have to think about, “Why is that?” And so, when we think about notification on failure. What does that mean? It means that we're telling Rabbit, “Hey, we want you to take this message and then tell us if you couldn't route it.” If, basically, the routing key that we provided you in combination with the exchange left it in a state where you couldn't put it into a queue.

And then, we have publisher confirmations. Publisher confirmations are awesome. They're very lightweight compared to all the other alternatives which is to say that when you send those three-plus frames to publish a message, RabbitMQ is going to acknowledge the fact that you received that message. if you're a fast publisher, it'll acknowledge the fact that you sent that message or maybe multiple messages.

But, really, when it comes down to it, the notification on failure is going to come in the way of a channel close RPC. Publisher confirmations are going to come in the way of a basic ACK RPC.

Alternate exchanges are not part of the AMQP protocol but are an extension to them but put in place by Rabbit. Basically, what it's going to do is, in the case of failure to route a message to what you've said to route it to, it'll put it somewhere else.

HA queues or mirrored queues. They're slower but they're only slower because that message is coming in and it has to replicate out across all the RabbitMQ nodes in the cluster that you've defined from a number of replicas that you want in the queue. Think of it like a multi-leader replication in a database. It's got to arbitrate, “I've received this message. I know it's in all the queue that it's supposed to be in. I've published it out. I know it's been removed.” That's what makes it slower.

Transactions, so that's the TX class within AMQP. That's pretty slow just like with databases. In fact, this is a database transaction with commit and rollback. I would advise you just steer clear of it. They implement it. It's in the spec. But there are other ways to get at that.

So then, when you start combining things like HA queues with transactions, obviously, these are additive in the operations that they're performing at the protocol level or at the cluster level, so that's why they slow down.

Finally, persisted messages. Now, I could have persisted messages with no guarantees but they're still slower. That's because it's writing it to disk.

If you think about what RabbitMQ is doing underneath the covers, it basically has a database. And if, in that same analog, every queue is a table and you're appending rows to those tables and removing rows from those tables when you're dequeuing.

And so, if you have delivery mode 2 where you're persisting messages. Now, we have to write it to disk, fsync, and wait for the file system to say, “Yes, I've written that message to disk” which obviously is slower, which means that you need to have hardware that can support the I/O that you want to put onto disk.

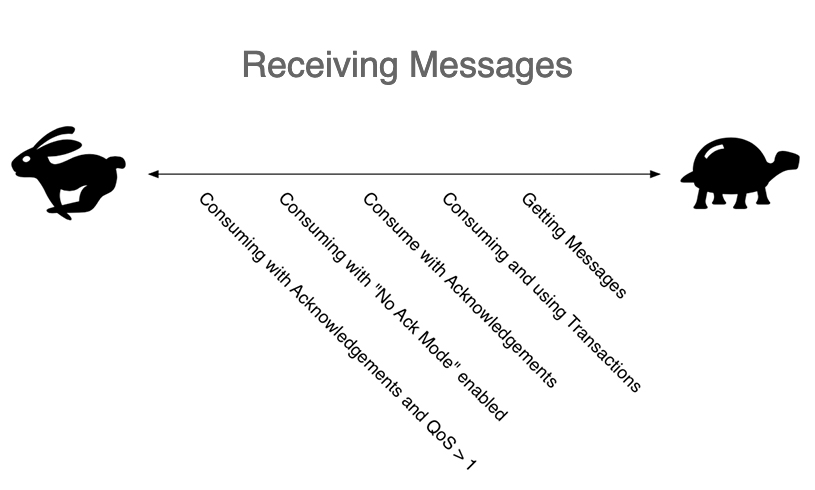

Receiving Messages

Often times, it's easy to get into something like a message bus and not think of the ramifications of those things. Of course, you want message guarantees. Of course, you don't want to lose anything because you're using RabbitMQ as a central part of whatever application you're writing. It's mission-critical or it's important to your business at the very least and you don't want to lose anything. But finding the right balance of message guarantees is really important because overdoing it can lead you down the road to, “Why is this so slow?”

Likewise, we have the same types of questions about receiving messages. Generally, on the performance side, we have the same type of concept. What's the fastest way? How do I get my throughput to be really high for this application? Again, it comes down to the protocol and the things that we're asking of the protocol.

For example, at the fastest level, what you want to do is you want to consume messages. The reason why you want to consume messages as opposed, to at the very other side of here, of getting messages is because of the protocol. Consuming messages takes your application and it says, “Hey, RabbitMQ, send me messages as quickly as you can after I ideally acknowledge that I've received them, and processed them, and told you can remove it from the queue.”

And so, that, from an operational perspective, looks like RabbitMQ saying, “Hey, here's three plus frames for this message. You’re application processing them and sending a basic acknowledge, a basic act, back to RabbitMQ, or a basic reject or an Ack back to RabbitMQ saying, “Hey, we're cool. Send me the next message.”

As opposed to getting messages which is a basic get method that is saying, “Hey, RabbitMQ, do you have any messages in your queue for me?” Rabbit’s going to say, “Hey, okay, let me go look.” It can't do any optimization around trying to figure out how to send those messages to you as quickly as possible. You're just asking, “Give me one message.” And then, it's going to send it to you in a response. And then, you're going to process it. And then, you still have to Ack it. It's not a very efficient way to get those messages.

So, we look over here at QoS>1. QoS prefetch is basically telling Rabbit how quickly can this one connection, this one channel process messages. And based upon that, Rabbit’s going to try and optimize to pre-allocate, out of the queue, that many messages and send them down to you. And so, sitting in your TCP buffer, on your machine, are going to be that many messages for your client to pick up and process if there are that many messages in the queue to give you. It is the fastest way to go.

A common mistake is to say, “Okay. I want you to send me messages as fast as possible, why don't you send me a hundred at a time?” Now, the problem with doing that is let's say that you're trying to parallelize, so you have a hundred consumers on a queue that have all said, “Give me a hundred messages ahead of time.” And then they can only process one message a second, you get into a very inefficient condition where you've pre-allocated too much and actually your throughput goes down pretty dramatically. Whereas, if you just said, QoS 1, because you can process one message a second, it's going to send you those messages as quickly as you can process them and get them into the pipeline. It's going to distribute them better so that you don't have aging messages sitting in the TCP buffer on your machine. It makes sense?

No Ack Mode

No ack mode. Technically, you might say this is this could be faster. But what happens in No ack mode, and I probably should have qualified this in the graph, is with a single consumer No ack mode is going to be the fastest way to consume messages. And that is RabbitMQ is just opening the firehose and sending you messages as quickly as it can, as quickly as it comes into the queue. They're going to sit in your TCP buffer. The problem with that is, unless your applications have some sort of really, really awesome way of dealing with understanding what data is available to it, before it ever gets to it, which probably is not something that's possible. If you were to segfault, your application were to be restarted without having some sort of graceful way to tell Rabbit, “Hey, I don't want to receive messages from you anymore” and then wait to ensure that all your messages have been processed, you're leaving messages in the TCP buffer and they're gone, so you've lost data

On the other side, consuming with acknowledgments is going to be slower. QoS, you're not worrying about it. You're just saying basic consume. You're receiving the messages. You're acknowledging them. And you're just getting them one at a time, as they go through. Transactions are even slower. But again, getting is the slowest.

Really, low-level protocol stuff which you don't really think of as an application or you shouldn't have to think of as an application developer. But as a rule of thumb, when you're working on these applications in production, from an operational perspective and figuring out what's going on, you start to just kind of understand the behaviors from this perspective even if you don't know why.

How will you use RabbitMQ?

Moving on from the low-level part of the protocol, I want to ask the question, “How will you use RabbitMQ?” I'm sure most of you are familiar with the term “not invented here” syndrome.

Basically, when you think about how you use RabbitMQ and the AMQ model and the way that you deliver messages, there are multiple paths that you can go down. There is the do-it-yourself method. And I think this is probably one of the more popular ways to use RabbitMQ. Frankly, this is the same type of thing that happens within the HTTP stack with web developers.

We have a protocol that has explicit contracts that allow us to define behaviors. We may bypass that and say, “Hey, I've got a message body.” And so, in the message body I'm going to give my other application all the information it needs to be able to know what to do with the message body and the message content itself.

The same type of thing happens in HTTP where you don't embrace, for example, status codes in your responses beyond 200 or 404 and not realize that, for example, 429 is used for rate limiting. You may just have, “I'm going to return a 400 error to you.” And in my JSON response, I'm going to have an error that says, “You've been rate limited.”

Use RabbitMQ and DYI

The same thing, I've seen in multiple RabbitMQ environments, where we may have a JSON and payload that provides context around the message and the message itself. So, as the picture kind of says you end up building a tow truck that's sitting on top of another tow truck when RabbitMQ provides all that that for you.

When I'm working with developers and talking about how to use RabbitMQ, I'm oftentimes saying, “Hey, use the model. Don't look at the message properties. Figure out how you can combine exchanges, and routing keys, and message properties to provide the context to your messages so that the message body itself is as terse or limited to the content you actually care about at the message level and go from there.

Use the AMQ Model

It's really interesting, this was a debate that I had very early on at MeetMe with my VP of Engineering. I wanted to embrace the model and use properties to describe the message and he wanted the flexibility to move away from RabbitMQ, if we wanted to or needed to, to something else. And so, his perspective was we take and we put everything in the payload and our applications then understand how the contract of the payload works to determine how we do messages, what the content is, when the message was created, what it's supposed to be used for. My perspective was, “Let's use the message properties for that because it's more efficient.”

Binary serialization and deserialization in AMQP, in all of your client libraries, is more efficient than JSON decoding, if you're using JSON as your message payload body which I think is probably the most popular thing to do.

Who has any unusual or non-JSON based bodies that are things like images? I use Avro datums for my payloads. Basically, JSON or other serialization formats inside non-contract-based serialization formats, inside the payload body, mean that you're consuming applications and you're publishing applications have to obviously serialize, deserialize those as well. There may be more efficient ways to get at that.

AMQP Model with RPC

There are multiple ways to use RabbitMQ, obviously. One of the ones that is very obvious is to use it for your application’s own RPC. How many people use RPC within Rabbit, explicit RPC? When you think about making your explicit RPC, I've highlighted in green all of the message properties that you should be thinking about using. For example, app-id, perhaps your consumer applications for your RPC care about where the message is coming from as part of their decision process, as part of the logic of what's processing the data.

Content-encoding and content-type are pretty obvious. I highly recommend, even if you have an implicit contract in your publishing, that embracing content-encoding and content-type is a very good way to future-proof your application. Content-encoding is basically saying, “I have compressed content. You know it's gzipping, compressed, or bzip, or whatever it might be. Content type being the mime type of the data as its serialized or if it's text or whatever it is.

Correlation-id. How many people are using correlation-id's? Really useful when you're trying to debug your systems.

Expiration and the idea of an RPC, by the time that the remote consumer that's processing and doing the work receives it, it may be too late. By setting an expiration, you can let that consumer know to throw it away.

Message-id is really important when it comes to RPCs because you want to be able to coordinate the response back and know that you're receiving back the right message in a highly concurrent environment so you can correlate those things. And reply-to. So, obviously, really important.

And if we come through here, we look at this diagram here. I'm not using the built-in-- I forget, is it called fast reply-to? There's a built-in reply-to behavior where replies don't have to go through a queue. Obviously, I'm not talking about that but we have this idea of a publisher and a consumer that is generating an RPC message, publishing to an exchange. It goes into a worker queue. Again, we have these combination consumer publishers that are processing and doing our work, sending it back to a response queue. We're going to use the reply-to header to say what that response-to queue is going to be.

Keeping track of the timestamp of when the message itself was published is important. I would caution against the use of timestamp to describe data within the payload and instead, kind of contrary to what I've been saying about everything else, I would use this as an attribute of the message.

Type. Really important. Even if you're implicitly relying on behaviors in RabbitMQ of saying, “Hey, I know it's made it into this queue over here, so this is the type of RPC that I am receiving.” Having this data from either debugging, or historical purposes, or just validating from a consumer perspective in case of operational misconfiguration is really useful. You can interrogate this. And when you receive a message and say, “Hey, you know what, somebody sent me an RPC to do something and I'm not the type of consumer to do that, so I'm just going to discard this message or I'm going to take some sort of alternate behavior.”

And, finally, user-id. Like app-id, really useful if you want to be able to interrogate those to determine if you are allowed to do the thing that you want to do.

AMQ Model with Message Firehose

This is the way that I prefer to use RabbitMQ. How many people use it more like a firehose of messages or events where you're just kind of publishing, and you tack on all sorts of different consumer types to the same messages? A few of you. Okay.

Again, we have these properties up here. The same things apply to what I was saying.

Coming over here, in this concept, I may have different things coming in from my various publishers, in this case, related to images. By using the routing keys and binding keys, I can multi-purpose these messages. Having a new profile image could do all sorts of cool stuff, give us our facial recognition, hash the image so that I can look for abusive patterns or that sort of thing, and store it in the case of something like Facebook.

And then I might - all the way down here, just want to audit everything that's going on in my system. And so, I could just say, “Give me everything related to images. I'm going to audit them. I'm going to stick them in logs or something like that.” It really allows you to multi‑purpose that thing coming into RabbitMQ in an efficient way.

Common User Problems

Common user problems. And all these, somewhat, come down to not understanding AMQP. Who's had problems with heartbeats? I know it's more than two or three of you. Probably, you're using the Python library or the PHP library, I would imagine. That's because heartbeats are bi-directional RPCs. Single-threaded applications that are doing work after they've received a message can't respond to the heartbeat requests coming from the server.

if you don't know the protocol and you don't understand that, what you'll end up with is a consumer application or a publisher application that is doing just fine. It's going. It's doing all that stuff and then it disconnects and you don't know why. Maybe, it disconnects at opportune times like you're in the middle of converting a very big video to a different format or something like that.

Not understanding the throttling of messages. You're publishing, publishing, publishing. You've got this application that basically is like a super high request rate application that's sending a message on every request to RabbitMQ. You're not letting the client library breathe in a single-threaded application and you get disconnected or throttled. Somewhere along the line, RabbitMQ is trying to say, “Hey, slow down. You’re using too much memory. Or, you’re publishing too fast in relation to how fast you're consuming and you're using too many resources.”

There are multiple ways for RabbitMQ to respond to that. The first way, these days, is to send you a connection blocked RPC. Your application is going to receive a frame from RabbitMQ that says, “Hey, you're publishing too fast and I'm blocking you from publishing.” But if your application’s not breathing, if it's not listening for what Rabbit’s telling you, you're never going to know.

Ultimately, the emergency brake in that scenario is TCP back pressure where RabbitMQ just stops reading from the socket that you're sending data to it in. That's not intuitive. What ends up happening is your application ends up locking up or having some sort of poor performance-related behaviors.

Not understanding that bi-directional nature of the RPC protocol between the server and the client, and the fact the server is going to send commands or invoke methods on your client, if you're not thinking about that, you can run into weird edge cases that you weren't expecting.

Another fun one, which I mentioned before, is channels. One of the interesting things of supporting client library in the open source world is the number of support requests you've received from people who are relatively new to using RabbitMQ and saying, “Hey, I'm trying to write this multi-threaded application or whatever it is and I've got 50 channels open, doing all these different things. I'm either getting poor performance or application behavior. That it’s hard to figure out what's going on.”

In essence, it's too complex. Simplify it. Bring it down. Use a channel - one channel per connection, period. And when that closes, open another one.

If you want to have some level of concurrency in your application, maybe you need to open multiple connections but beware because connections and channels have overhead in RabbitMQ. This goes down to understanding how RabbitMQ is written and the fact that it's written in Erlang. While highly efficient, every channel, every connection is a process within the RabbitMQ server in the Erlang VM. And so, as you're opening them and closing them, you're basically using resources on the server side. And so, if you have more than you actually need to do the work that you're doing, you're just making Rabbit work harder.

And then you have slow performance due to poor choices. One of the things that happened when I first came into AWeber was they had a RabbitMQ cluster. They were using a Python stack. They were using my client library that I had written. They couldn't figure out why it's so slow.

They're like, “Gavin, you know RabbitMQ. Hey, you know this client library, your stuff sucks. it's so slow.” And really, I'm like, “Well, that doesn't make sense because I have applications that are doing tens of thousands of messages per second and they're not slow.”

It turned out it was because they didn't understand the ramifications of delivery-mode to, like I was talking about earlier, where RabbitMQ was persisting every message to disk. They're thinking, “Hey, these messages are really important. And so, we're going to have a 3-node Rabbit cluster running on virtual machines with 20 gig hard drives, and 8 gigs of RAM, and four processors. We're going to publish 3000 to 4000 messages per second with highly available queues where it's synchronized across all three nodes, and we're going to write them to disk.”

Of course it's going to be slow. You're asking Rabbit to have multileader replication with persisted rows. Not much you can do about that other than say, ”Hey, where's the level of message deliverability guarantee that I care about?” and go from there.

AMQP Problems

I talked about how knowing RabbitMQ or groking RabbitMQ is to know AMQP. It's really important, in knowing AMQP, that there are warts. I happen to both love and hate the protocol. I think it's really good in in many ways.

If you go to the Rabbit site, you'll see there are 30 items in 0-9-1 errata page. That includes logic errors in the spec, incompatibilities between the versions in the low-level marshaling of frames, behavioral differences.

One of the things that's really tough-- or at least used to be, was dealing with authentication failure issues because you would connect and as “specced”; RabbitMQ or any other AMQP broker, if you fail to authenticate, it just closes the connection. And it doesn't even have an authentication failed exception response to that closing. It just closes the connection at whatever particular frame part of the sequence it was in that you're trying to establish it.

In the Pika library, I created an exception called probable authentication failure which was to say that, “I think this is probably an authentication failure because of where, in the startup sequence, it occurred but I don't know because it can't tell me.” Fortunately, the RabbitMQ team added a capability and an exception code that will explicitly tell you that.

Going back, a lot of what you heard me talk about was that bi-directional RPC nature. I think, ultimately, the fact that you can multiplex and do multiple things on a connection at any given time is somewhat of the root of most of the problems with AMQP 0-9-1. And so, the responsibilities of a client really come in there.

RabbitMQ Extensions

With those warts, we look at what RabbitMQ has done to address those. And that's really cool. AMQP is extensible which is awesome.

Federation

First is Federation. It's important to think about it. You think about the features in RabbitMQ and they're building on top of AMQP. When you think about the behavior federation, it’s implementing the AMQ model and just has built in consumers and publishers that are working underneath the hood to do the things that you're doing. With federated exchanges, we basically have an upstream queue, that's an internal queue declared by RabbitMQ, that's bound to the exchange. And so, your publishers are going into that upstream queue. And then, you have the federated publisher that's consuming from that queue and publishing to the exchange on the other side. That's important to say I've boxed these off but this guy can live here or here. That's the case with all of these.

If you have federated queues, it's the same. It's the AMQ model. We publish into the upstream queue. If I have a consumer hanging off this queue over here, we basically have this consumer publisher that connects to the upstream and consumes messages and publishes them into that queue.

In essence, you could write this stuff yourself. It's the same type of things that you're writing when you're writing publishers and consumers. It just happens to be built in.

Shovels

The same is true with Shovel. These diagrams are not terribly different but the behaviors of how they work are slightly different. If you're going to shovel to an exchange, you basically have your source queue and a consumer publisher built into RabbitMQ that's publishing to the exchange on the downstream side or the destination side. If you're doing a queue, you basically have your source queue and you have that consumer publisher that's going to that queue on the other side.

Built into Rabbit, maybe mysterious because you're just seeing it in the UI or you're seeing it from the command line but, ultimately, it's just like your applications. They're just written in Erlang as part of RabbitMQ but doing the same exact things. Basic publish, basic ack.

Protocol Plugins

Protocol plugins. This might be surprising to some of you. How many of you have used protocols other than AMQP with Rabbit? MQTT, AMQP 1.0. Underneath the covers, the plugins are just translators that are taking whatever protocol you're talking, whether you're publishing methods, or publishing messages, or consuming messages and they're turning it into AMQP 0-9-1 with an internal client and talking to it. Surprise, anybody? No?

AMQ Model and Protocol Extensions

And then there's all these awesome connections. I don't have a lot of time left so I'm going to skip over reading through the list. But, basically, the RabbitMQ team extended the AMQ protocol, adding new classes and methods. For example, publisher confirmations the confirm class is an extension to the AMQ protocol.

Basic.Nack is a correction of the fact that you can't multi-reject the same way you can multi-ack so they added a basic.nack method that allowed you to negatively acknowledge multiple messages.

AMQ Model and Protocol Extensions (Continued)

As I mentioned before, authentication failure notifications. All of the things. All the RabbitMQ‑specific stuff. These are extensions to the protocol itself, or the spec, or the methods. They're features within Rabbit but they build upon the AMQP.

Architectural Impact of Embracing AMQP

Finally, we'll talk about the architectural impact of embracing AMQP. When you move beyond this idea of Rabbit as a message broker and I'm going to shove all my stuff in the message and let my applications sort it out. You get to having simplicity, extensibility of your messages using them for multiple purposes. You get some robustness in your application framework and flexibility in use.

Use of Message Properties

Using message properties. Basically, if you declare, instead of implicitly relying on application behaviors, that you're compressing them or whatever it is you're doing, from an encoding perspective. You're specifying the content type. You're allowing consumers of those messages no matter what the consumer is - something written in Go, Erlang, Python PERL, PHP, Ruby. Whatever it is, it should be able to know, by looking at the content type, explicitly how to deal with the way that the content is serialized or stored within the message body.

Type tells those consumers information about the message that it doesn't have to define/divine from the message body itself. You get traceability and ease in debugging by using Correlation-ID and Message-ID. This idea of logging and being able to look across logs of all of your distributed applications and being able to correlate behaviors or a process by those IDs.

Finally, better debugging. If you don't use app ID today, I implore you to go back to your applications and do it because two to three years from now, when you're trying to do some sort of upgrade to a process and you don't know where the message is coming from, you're going to kick yourself. App-ID is extremely useful from that perspective.

Message Flexibility

Finally, by embracing this, you get flexibility in your messages. You can use them for multiple purposes. If you're not thinking about your messages beyond the scope of whatever it is that you're doing today, you're selling Rabbit short.

One of the things we do at AWeber is we think of these as events. What happened in our ecosystem of our application? Not only do those events get used by consumer applications to do the tasks that we need to do, they also get streamed into a data warehouse. Now, we have this entire warehouse of an ecosystem of everything that's happened in our environment that now we can use for Business Intelligence, or whatever other types of analytical purposes we want to do, or recover from failures because behaviors happen in our environment that we weren't expecting.

RabbitMQ in depth by Gavin M. Roy

This is my book. I hate to be the show but I think my publisher would be really mad if I didn't mention it. I go into all the things I talked about at a lot deeper level. I think it's a pretty quick read, so I recommend that you pick it up. Thank you.

Questions from the audience

You showed a plugin for the server 1.0, I just wonder what the 1.0 actually solves any of those problems and why Rabbit doesn't support one?

It was supported to by way of a plugin. It's a different protocol. It's rather unfortunate that they called it AMQP. I wish they would have named it something else because, inherently, if you look at the lineage of the AMQP protocol, it's quite different in its definition. And it does solve. Probably, the biggest problem in that it's not as lenient in that whole multiplexing, putting multiple behaviors at any given time onto a connection, but it also doesn't implement the model in the same way.

In essence, right now, the identity of RabbitMQ is this protocol. I think, and I obviously can't speak for the Rabbit team, but the things that I've seen them do as far as embracing and extending, that may not always be the case but that's where we are today.

When do you see RabbitMQ is the right technology for event versus something like Kafka?

For me, the flexibility that I gain from RabbitMQ, that I don't have in Kafka, makes it the right thing - that multi purposing and explicit contracts. Now, admittedly, I have limited Kafka use. But the fact that I've got this very flexible model in RabbitMQ and Apache Kafka itself is a little more rigid in that sense, it seems better to me. So, for example, the way that we do our warehousing is we route all of our events to a single queue or a single set of sharded queues. And then, we have one consumer application with a number of connections that listen off of that thing. And then, we have different other applications that are connected to other routed applications.

How do you manage your AVRO schemas?

We have, basically, a git repo and a web server docker image that gets published and has those schemas. And in our applications, we use content type to specify that it’s AVRO. And we use the type field to specify the schema name and version number that we're going to retrieve. And so, publishers basically pull that in on startup. Consumers, dynamically, when they receive a message, will go look to make sure that it's a supported type, pull it down, and use that from a deserialization perspective.