Update 2021-03-01

With the launch of our new plans, we have discontinued our 2-node offering for new clusters. We now offer 1-node, 3-node and 5-node clusters.

CAP theorem

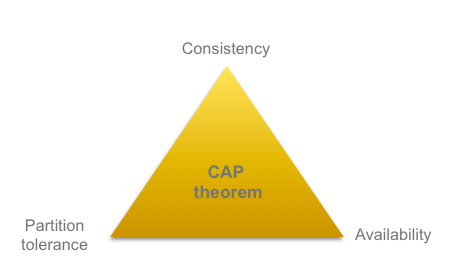

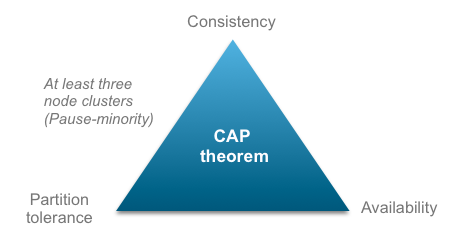

When talking about distributed systems (collections of interconnected nodes that share data), it's common to mention the CAP theorem. The CAP theorem states that a distributed system cannot simultaneously guarantee Consistency, Availability, and Partition Tolerance. One of them has to be sacrificed, so you need to identify which of the three properties matter most to your application.

Consistency means that data is the same across the cluster - all nodes see the same data at the same time. A read should return the same data no matter which node serves it.

Availability means that clients can find a replica of the data, even in the presence of failures. In other words, the cluster remains accessible even if one of its nodes goes down.

A network partition refers to the failure of a network device that causes a network to be split. Partition tolerance means that the system continues to function when such network partitions occur.

Multi-AZ and Mirrored nodes

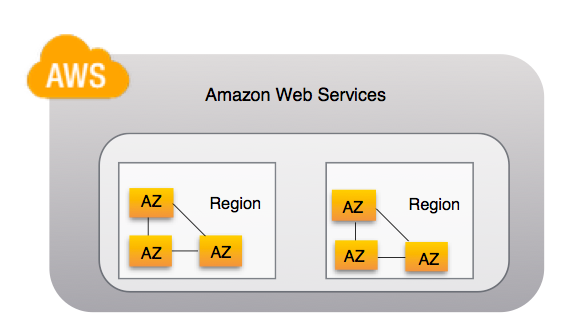

Multi Availability Zone (AZ) means that the nodes are located in different availability zones. An AZ is an isolated location within a region, and a region is a separate geographic area (like EU-West or US-East). Multi-AZ is a standard setting for all clusters (with at least two nodes) for CloudAMQP instances hosted in AWS. More information about AWS regions and Availability Zones can be found in the AWS documentation. If you need nodes to be spread across regions, we recommend checking out the federation plugin.

Multi-AZ provides enhanced availability: the queues are automatically mirrored between availability zones. Each mirrored queue consists of one leader and one or more followers. Messages published to the queue are replicated to all followers. RabbitMQ HA is used to handle this; see the RabbitMQ HA documentation for more information.
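CloudAMQP sets up mirroring for you, so nothing needs to be configured by hand. Purely as an illustration of what a classic mirrored-queue policy looks like, the sketch below builds the kind of JSON body that the RabbitMQ management HTTP API accepts for a policy (`PUT /api/policies/{vhost}/{name}`). The function name `build_ha_policy` and the default pattern are assumptions made for this example, not CloudAMQP's actual configuration.

```python
import json


def build_ha_policy(pattern=".*", priority=0):
    """Return a policy body that mirrors every matching queue to all nodes.

    Hypothetical helper for illustration; the keys follow the classic
    mirrored-queue policy format ("ha-mode", "ha-sync-mode").
    """
    return {
        "pattern": pattern,        # regex matched against queue names
        "apply-to": "queues",      # only queues, not exchanges
        "priority": priority,
        "definition": {
            "ha-mode": "all",              # one leader, followers on all other nodes
            "ha-sync-mode": "automatic",   # new followers sync existing messages
        },
    }


# Show the JSON that would be sent as the request body.
print(json.dumps(build_ha_policy(), indent=2))
```

With `ha-mode` set to `all`, every queue that matches the pattern gets a follower on each of the other nodes in the cluster.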

Pause minority

Protects data from being inconsistent due to net-splits

Pause minority is a partition handling strategy in a three-node cluster that protects data from being inconsistent due to net-splits.

If both partitions in a two-node cluster accept messages during a net-split and the nodes then rejoin, the messages of the partition (the node) with the fewest connections will be discarded and that node will instead resync with the other partition. Messages that were published to that partition, but were not yet consumed from it, will be lost. Pause minority protects against this kind of data inconsistency.

Pause minority: When a node detects that it is no longer connected to the majority of the nodes in the cluster, it will pause itself - pause the minority. The paused node won't accept any new messages until the connection with the other nodes is re-established. See the RabbitMQ partitions page for more information.
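The decision each node makes can be sketched as a small function. This is a simplified model, not RabbitMQ's implementation: the name `should_pause` is made up for this example, and it assumes the rule from the RabbitMQ documentation that a node counts as being in a minority when it can reach half of the cluster or fewer (itself included).

```python
def should_pause(reachable_nodes: int, cluster_size: int) -> bool:
    """Return True if a node should pause itself under pause minority.

    reachable_nodes counts the node itself plus every peer it can still reach.
    A node pauses when its side holds half of the cluster or fewer.
    """
    if not 1 <= reachable_nodes <= cluster_size:
        raise ValueError("reachable_nodes must be between 1 and cluster_size")
    return reachable_nodes * 2 <= cluster_size


# Three-node cluster split into 1 + 2: only the isolated node pauses.
print(should_pause(1, 3), should_pause(2, 3))
# Two-node cluster split into 1 + 1: neither side holds a majority.
print(should_pause(1, 2))
```

Under this rule both halves of a split two-node cluster would pause, which is why the strategy is paired with three nodes: one side of a split can still hold a majority and keep serving clients.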

CloudAMQP Setups

When you create a CloudAMQP instance, you get the option to have one, two or three nodes (standard is two nodes). The cluster will behave a bit differently depending on the cluster setup. It is not always easy to decide how many nodes you need for your system.

Generally, designers cannot forfeit partition tolerance in the system, and therefore face a difficult choice between Consistency and Availability. In a system that needs to be highly available, there are a number of scenarios under which consistency cannot be guaranteed, and for a system that needs to be strongly consistent, there are a number of scenarios under which availability may need to be sacrificed.

When we spin up instances, we use different underlying instances depending on data center and plan. If you are interested in the underlying instance for one of your specific instances, just send us an email or enter the chat.

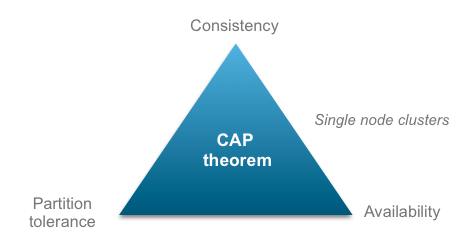

Single node

Dedicated server - Leader/standby failover

When you create a CloudAMQP instance with one node, you will get one single node with high performance. Our monitoring tools will automatically detect if your node goes down; if that happens, they will bring up a new instance and remount the same disk (EBS) on it. This is called leader/standby failover. The new node will be located in the same AZ. If you declare the queue as durable and send the messages with the persistent flag, the messages will be saved to disk and everything will be intact when the node comes back up again.
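As a minimal sketch of the durable queue plus persistent flag combination, the helper below uses the Python client pika. The function name `publish_persistent`, the queue name and the connection URL in the comments are placeholders chosen for this example.

```python
def publish_persistent(channel, queue_name, body):
    """Declare a durable queue and publish one persistent message to it."""
    # Imported here so the module still loads where pika is not installed.
    import pika

    # durable=True: the queue definition itself survives a broker restart.
    channel.queue_declare(queue=queue_name, durable=True)

    # delivery_mode=2 marks the message as persistent (written to disk).
    channel.basic_publish(
        exchange="",              # default exchange routes by queue name
        routing_key=queue_name,
        body=body,
        properties=pika.BasicProperties(delivery_mode=2),
    )


# Usage, assuming a reachable broker (the URL below is a placeholder):
#
#   import pika
#   params = pika.URLParameters("amqps://user:password@host/vhost")
#   connection = pika.BlockingConnection(params)
#   publish_persistent(connection.channel(), "task_queue", b"hello")
#   connection.close()
```

Both settings are needed: a persistent message in a non-durable queue is lost along with the queue when the node restarts.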

In terms of the CAP theorem: Partition tolerance will obviously be sacrificed. Network problems might stop the system.

A single-node instance gives you high performance at the cost of availability.

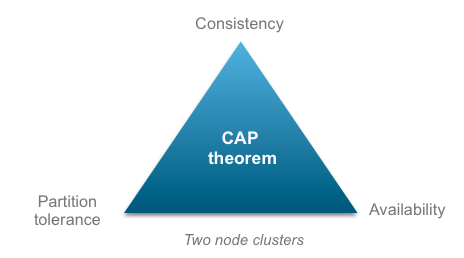

Two nodes

Dedicated server - Availability HA cluster, Multi-AZ cluster, Mirrored nodes

When you create a CloudAMQP instance with two nodes, you will get half the performance compared to the same plan size for a single node. The nodes are located in different availability zones and queues are automatically mirrored between availability zones. You get a single hostname for the instance. That hostname resolves to the IPs of all nodes in the cluster, so if one node fails the client is already aware of the other nodes and can continue there.
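A client can take advantage of the single hostname roughly as follows. This is a sketch using only the Python standard library: `node_addresses` and `connect_to_any` are hypothetical helper names, port 5672 is the usual AMQP port, and `connect` stands for whatever function your client library uses to open a connection to one IP.

```python
import socket


def node_addresses(hostname, port=5672, resolver=socket.getaddrinfo):
    """Return the distinct IPs that the cluster hostname resolves to."""
    infos = resolver(hostname, port, type=socket.SOCK_STREAM)
    addresses = []
    for _family, _type, _proto, _canonname, sockaddr in infos:
        ip = sockaddr[0]
        if ip not in addresses:   # keep first-seen order, drop duplicates
            addresses.append(ip)
    return addresses


def connect_to_any(addresses, connect):
    """Try each node in turn and return the first successful connection."""
    last_error = None
    for ip in addresses:
        try:
            return connect(ip)
        except OSError as exc:    # this node is unreachable, try the next one
            last_error = exc
    raise ConnectionError(f"no node reachable among {addresses}") from last_error
```

Many client libraries already retry across the resolved addresses on their own, so check what your client does before adding logic like this.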

In terms of the CAP theorem: Consistency might be sacrificed, and clients may read inconsistent data. Nodes could remain online even if they can't communicate with each other, and they will resync data once the partition is resolved. If both nodes accept messages and then rejoin after the failure, the messages of the partition (the node) with the fewest connections will be discarded and that node will instead resync with the other partition. Messages that were published to that partition, but were not yet consumed from it, will be lost.
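The rejoin rule described above can be modelled in a few lines. This is a toy simulation, not broker code: `resolve_net_split` and the shape of the `partitions` dictionary are assumptions made for this example, and it tracks only messages that were published during the split and not yet consumed.

```python
def resolve_net_split(partitions):
    """Pick the surviving partition and list the messages that are lost.

    partitions maps a partition name to a dict with:
      "connections": number of client connections on that side
      "unconsumed":  messages published there during the split, not yet consumed
    The side with the most connections wins; ties are broken arbitrarily
    (here: the first one listed).
    """
    winner = max(partitions, key=lambda name: partitions[name]["connections"])
    lost = []
    for name, state in partitions.items():
        if name != winner:
            # The losing side discards its state and resyncs from the winner.
            lost.extend(state["unconsumed"])
    return winner, lost


winner, lost = resolve_net_split({
    "node-a": {"connections": 5, "unconsumed": ["order-1"]},
    "node-b": {"connections": 2, "unconsumed": ["order-2", "order-3"]},
})
print(winner, lost)
```

In this example `node-b` has fewer connections, so the two messages published to it during the split are the ones that disappear after the nodes rejoin.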

A two-node cluster gives you high availability at the cost of performance (compared to a single node) and data consistency.

Three nodes

Dedicated server - Availability HA cluster, Multi-AZ cluster, Mirrored nodes, Pause minority

When you create a CloudAMQP instance with three nodes, you will get 1/4 of the performance compared to the same plan size for a single node. The nodes are located in different availability zones and queues are automatically mirrored between availability zones. You will also get pause minority - by shutting down the minority component, you reduce duplicate deliveries compared to allowing every node to respond.

You get a single hostname for the instance. That hostname resolves to the IPs of all nodes in the cluster, so if one node fails the client is already aware of the other nodes and can continue there.

In terms of the CAP theorem: Consistency is more expensive to maintain than availability in terms of throughput and latency. With three nodes you get consistency, but clients connected to the paused node will not have "availability" during a net-split. There is a risk of some data becoming unavailable.

Further reading

Further information about RabbitMQ failure modes, along with recommended reading, can be found on the RabbitMQ partitions page, on the RabbitMQ reliability page and in the API guide section on recovery.

Please email us if you have any suggestions or feedback.