Adoption of the microservice architecture is on the rise - you’ve probably seen the term thrown around. This growth is mostly driven by the benefits the architecture offers: improved scalability, flexibility, and faster development cycles.

Microservices provide a powerful framework, and building each individual service is usually the easiest part. But these services rarely exist in isolation: you need to coordinate them and get them to communicate. And this is where the complexity starts to show.

As far as communication in microservices goes, two popular approaches grace the stage: synchronous https and asynchronous lightweight messaging. In theory, https-based microservices might appear more straightforward to implement due to their familiar request-response nature. However, in reality, they have some major limitations.

In this two-part blog series, we will examine some of the drawbacks of https-based microservices and explore how message queueing can address these challenges.

- In this part, we will look at the challenges around tight coupling in https-based microservices.

- Then in part 2, we will explore the challenges around load balancing and service discovery in https-based microservices.

To help you get a better picture of these challenges, we will explore them by considering a use case.

Stay with me…

Imagine a retail store application built using microservices, where each service focuses on a specific task. Let's break down the steps involved when a user places an order:

- Order Processing Service: When a user places an order, this service takes care of handling the order details and initiates subsequent actions.

- Payment Processing Service: Before an order is considered complete, the payment service steps in and processes the payment for the order.

- Inventory Service: Once an order is placed, the inventory service comes into play. It updates the product details, ensuring that the stock levels accurately reflect the order.

- Shipment Service: Once the order is complete, the shipment service takes over. It processes the shipment to the customer, ensuring timely delivery.

- Notification Service: The notification service plays an essential role in customer communication. It sends email notifications to the customer, providing updates on the order status.

In summary, when an order is processed by the order processing service, it triggers a chain of actions: processing payment, updating inventory, initiating shipment, and sending email notifications.

Now let’s explore the drawbacks of https-based microservices in the context of the use case we just described.

Tight coupling in https-based microservices

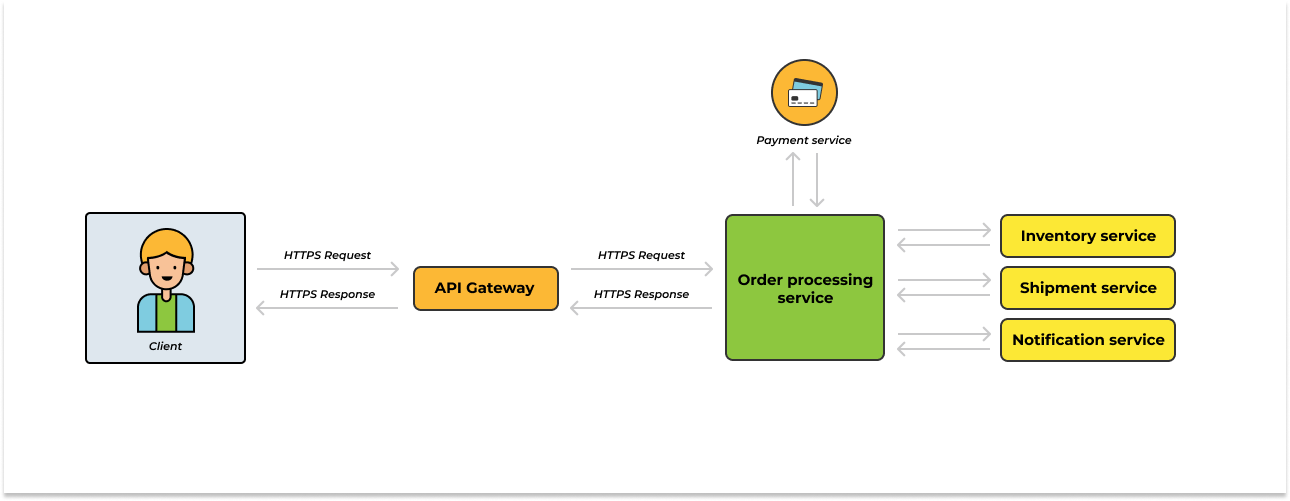

Our https-based retail store application will look like what’s shown in the image below:

Figure 1 - https-based retail store application

In the image above, tight coupling arises due to the synchronous nature of https-based communication between services. This is true because https-based communication predominantly follows a request-response model. Every request made by one service expects an immediate response from the receiving service.

In our case, the completion of an order depends on the payment, inventory, shipment, and notification services. The order processing service has to wait for responses from all of them before confirming an order. Overall, the tight coupling occurs because the order processing service cannot continue without receiving immediate responses from all the other services.

This tight coupling in turn leads to some drawbacks in the https-based microservices:

- Inability to isolate faults: When tight coupling exists, a fault or failure in one service can have a cascading effect on other interconnected services. For instance, since the order processing service expects an immediate response from the services it is connected to, a failure or bug in any of the services will halt the entire order processing flow. This in turn leads to a breakdown in the overall system functionality.

- Long response time: Tight coupling requires services to wait for immediate responses from others, leading to longer response times if delays or unresponsiveness occur. In our case, delays in payment, inventory, notification, or shipment services directly impact order processing. Consequently, end users may experience frustration due to slower order processing times.

- Inflexibility in introducing changes: Consider a scenario where additional action is required during order processing, such as detecting fraudulent transactions in real-time. If we implement this feature as a separate microservice, it will require an update to the Order Processing microservice to invoke the new microservice. Consequently, code modifications and redeployment of the Order Processing microservice become necessary to consume the features offered by the new microservice.
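To make the first two drawbacks concrete, here is a minimal sketch in Python. It does not make real network calls: `call_service` is a hypothetical stand-in that sleeps to imitate a blocking request, and the latencies and service names are made-up values for illustration only.

```python
import time


class ServiceUnavailable(Exception):
    """Raised by a stand-in service to simulate a failed request."""


def call_service(name, latency_s, healthy=True):
    # Hypothetical stand-in for a blocking request: the caller waits here.
    time.sleep(latency_s)
    if not healthy:
        raise ServiceUnavailable(name)
    return f"{name}: ok"


def place_order_sync(services):
    # The order service calls each downstream service in turn and can only
    # confirm the order once every call has returned successfully.
    started = time.perf_counter()
    for name, latency_s, healthy in services:
        call_service(name, latency_s, healthy)
    return time.perf_counter() - started


# Long response time: the caller pays for every downstream delay.
healthy_chain = [
    ("payment", 0.02, True),
    ("inventory", 0.02, True),
    ("shipment", 0.02, True),
    ("notification", 0.02, True),
]
elapsed = place_order_sync(healthy_chain)
print(f"order confirmed after {elapsed:.2f}s")

# No fault isolation: a failing notification service fails the whole order.
broken_chain = healthy_chain[:3] + [("notification", 0.02, False)]
try:
    place_order_sync(broken_chain)
    order_confirmed = True
    failed_service = None
except ServiceUnavailable as exc:
    order_confirmed = False
    failed_service = str(exc)
    print(f"order failed because the {failed_service} service is down")
```

In this sketch the order is rejected even though payment, inventory, and shipment all succeeded, simply because the last call in the chain failed.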

As mentioned earlier, the drawbacks above stem from the request-response nature of https-based communication, which treats every request as an action that must be completed before the caller can move on.

In reality, not all actions require immediate completion - some can be executed at a later time. However, the synchronous https-based communication pattern fails to account for this distinction.

Now, this is not to say that synchronous https-based communication is never desirable. In our use case, an order cannot be considered complete until the payment has been processed, so it makes sense to keep the communication between the order processing service and the payment processing service synchronous.

On the other hand, inventory updates, shipment processing, and notification actions do not need to be completed before an order can be considered complete. As a result, it makes more sense to decouple these actions from the order processing flow.

In a scenario like the one above, and many more like it, adopting an asynchronous communication pattern by placing a message queue between the services is more advantageous.

Let me show you how…

Message queues and microservices: Towards a more decoupled architecture

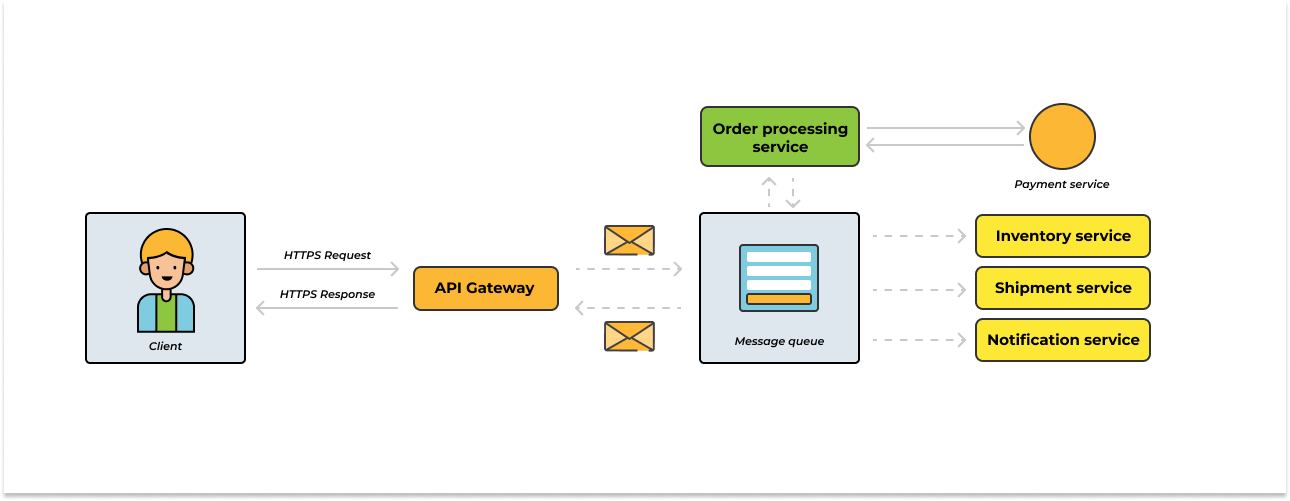

The image below shows what our architecture would look like when we replace https-based communication with message queueing:

Figure 2 - queue-based retail store application

In the image above, we replace https-based communication with a message queue. Message queues decouple microservices by enabling asynchronous communication: instead of calling each other and waiting for immediate responses, services exchange messages through the queue without depending on each other directly.

In the context of our use case, when an order is processed by the order processing service, it can enqueue a message indicating the need for inventory update, shipment processing, etc. Instead of directly invoking those services and waiting for their responses, the order processing service can continue its execution independently.

The message queue acts as an intermediary, holding the message until those services are ready to process it. This allows the services to operate independently of one another, breaking the tight coupling that exists in synchronous https-based communication.
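The flow described above can be sketched with Python's standard library. This is only an in-process illustration, not a real broker: `queue.Queue` stands in for the message queue, a thread stands in for the inventory service, and `charge_payment`, `place_order`, and the message fields are hypothetical names chosen for the example.

```python
import queue
import threading

order_events = queue.Queue()  # stand-in for a queue hosted on a broker
processed = []                # records what the worker has handled


def charge_payment(order_id):
    # Payment stays synchronous: the order is not complete without it.
    # A real implementation would call the payment service here.
    return True


def place_order(order_id):
    if not charge_payment(order_id):
        return "payment failed"
    # Hand the follow-up work to the queue and return without waiting.
    order_events.put({"event": "order_placed", "order_id": order_id})
    return "confirmed"


def inventory_worker():
    # Consumes messages at its own pace; None is used as a shutdown signal.
    while True:
        message = order_events.get()
        if message is None:
            break
        processed.append(("inventory", message["order_id"]))


status = place_order(42)

# The worker only starts now, yet the order was already confirmed:
# the queue held the message until the consumer was ready.
worker = threading.Thread(target=inventory_worker)
worker.start()
order_events.put(None)
worker.join()

print(status, processed)
```

Note that the order is confirmed before the inventory worker even exists. With a real broker the same idea applies across processes and machines, but the client calls would differ from this sketch.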

This decoupling in message queue-based microservices has some major benefits. For example:

- Fault isolation: By introducing message queueing in our architecture, we enable asynchronous communication that decouples the services. Because the direct dependency between the services has been eliminated, if one service encounters a fault, the other services continue processing requests independently, and the messages meant for the faulty service wait in the queue until it is ready to process them.

- Reduced response time: With message queuing, services can asynchronously process requests without waiting for immediate responses. For example, when an order is processed, the order processing service can put the request in a message queue and proceed with other tasks. The inventory, notification, and shipment services can consume the messages from the queue at their own pace, reducing the overall response time and improving the user experience.

- Flexibility in introducing changes: Message queueing promotes loose coupling between microservices, which makes it easier to introduce changes or add new functionality. In our use case, if a new service, such as a fraud detection service, is introduced, all we need to do is bind it to the message queue and have it consume messages - we don’t need to update any of the existing services.
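The last benefit can be illustrated with a toy example. The `FanoutExchange` class below is a hypothetical in-memory stand-in, loosely modeled on the fanout exchanges found in brokers such as RabbitMQ: every bound queue receives its own copy of each published message. The queue names are assumptions made for this sketch.

```python
from collections import deque


class FanoutExchange:
    """Toy in-memory exchange: copies each message to every bound queue."""

    def __init__(self):
        self.queues = {}

    def bind(self, queue_name):
        # Binding creates a queue that receives all future messages.
        self.queues[queue_name] = deque()
        return self.queues[queue_name]

    def publish(self, message):
        for bound_queue in self.queues.values():
            bound_queue.append(message)


def place_order(exchange, order_id):
    # The producer only knows the exchange, never the consumers behind it.
    exchange.publish({"event": "order_placed", "order_id": order_id})


exchange = FanoutExchange()
inventory_q = exchange.bind("inventory")
shipment_q = exchange.bind("shipment")
notification_q = exchange.bind("notification")
place_order(exchange, 1)

# Later: add fraud detection by binding one more queue.
# place_order() is not modified or redeployed.
fraud_q = exchange.bind("fraud_detection")
place_order(exchange, 2)

print(len(inventory_q), len(fraud_q))  # prints "2 1"
```

The fraud detection queue only sees orders placed after it was bound, and the order processing code never changed. How bindings and delivery work in a real broker depends on the broker and exchange type you choose.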

Conclusion

We have explored the drawbacks of https-based communication in microservices and how message queueing addresses these challenges. The tight coupling in https-based microservices leads to issues such as poor fault isolation, inflexibility in introducing changes, and long response times. However, by adopting message queues, we can achieve a more decoupled architecture that enhances fault isolation, provides flexibility in introducing new changes, and reduces response times.

Ready to start using message queues in your distributed architecture? RabbitMQ is one of the most popular message queueing technologies, and CloudAMQP is one of the world’s largest RabbitMQ cloud hosting providers. In addition to RabbitMQ, we also created our in-house message broker, LavinMQ - we benchmarked its throughput at around 1,000,000 messages/sec.

Easily create a free RabbitMQ or free LavinMQ instance today to start testing out message queues. You will be asked to sign up first if you do not have an account, but it’s super easy to do.