In the previous article in this series, we saw how message queues yield a more decoupled microservice architecture compared to HTTPS. In this part, we will take our exploration a step further.

We will explore the challenges around load balancing and service discovery in HTTPS-based microservices.

Again, to help you get a better picture, we will examine these challenges in the context of our use case from the previous part: the retail store application.

Load balancing and service discovery in HTTPS-based microservices

In the previous post, we presented an idealized picture of an HTTPS-based microservice architecture, with just one instance of the order processing service receiving requests directly from the API Gateway. Every other service had a single instance as well.

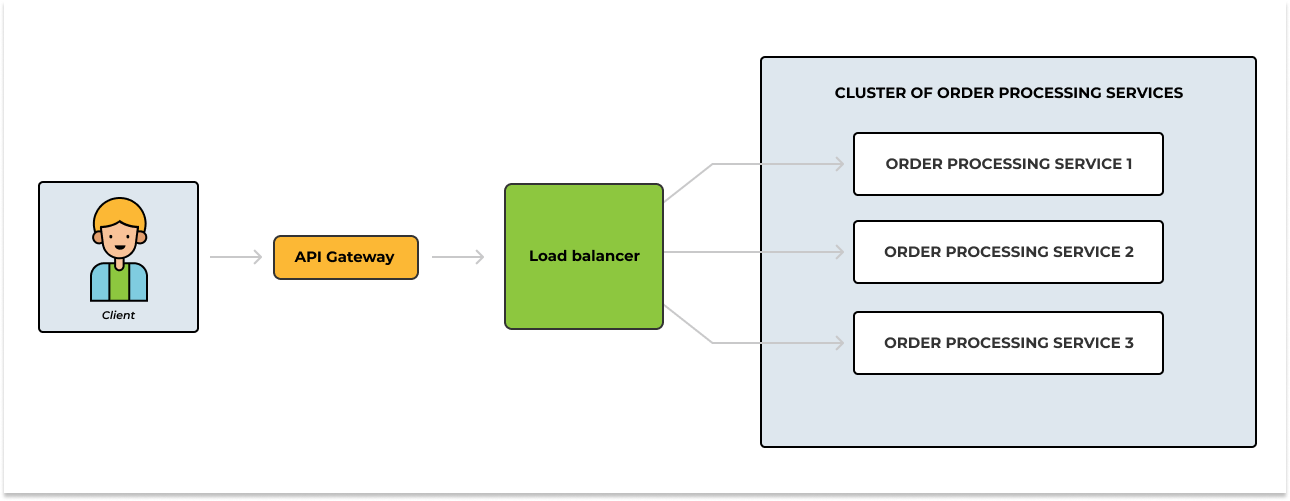

In realistic scenarios, you are more likely to have multiple running instances of a service, and the number of running instances can be scaled up and down on the fly based on traffic. Having multiple instances of the order processing service, for example, creates the need for a load-balancing mechanism that evenly distributes requests among the instances.
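To make "evenly distribute requests" concrete, here is a minimal sketch of round-robin selection, one common load-balancing strategy. It is an illustration only, not how any particular load balancer is implemented: the `RoundRobinBalancer` class and the three instance addresses are hypothetical.

```python
import itertools


class RoundRobinBalancer:
    """Hands out instance addresses in rotation (illustrative sketch)."""

    def __init__(self, instances):
        if not instances:
            raise ValueError("at least one instance is required")
        # cycle() repeats the list forever: a, b, c, a, b, c, ...
        self._cycle = itertools.cycle(list(instances))

    def next_instance(self):
        """Return the address that should receive the next request."""
        return next(self._cycle)


# Hypothetical addresses of three order processing instances.
balancer = RoundRobinBalancer([
    "10.0.0.11:8080",
    "10.0.0.12:8080",
    "10.0.0.13:8080",
])

# Six incoming requests are spread across the three instances in turn.
picks = [balancer.next_instance() for _ in range(6)]
```

Note that the list of instances is fixed when the balancer is created, so this sketch only holds up while the set of instances and their addresses stay the same.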

Depending on your needs, you could implement an arrangement where an API Gateway works in conjunction with a load balancer or you could simply replace your API gateway with the load balancer.

In our retail store application, we care about separation of concerns, so we will have a standalone API Gateway that’s responsible for authenticating and authorizing requests, as well as throttling and caching. A separate load balancer will be responsible for evenly distributing requests among the instances of the order processing service.

The image below demonstrates what this new arrangement will look like:

Figure 1 - API Gateway and load balancer in an HTTPS-based microservice

Even though the image above correctly visualizes the communication between the API Gateway, the load balancer and the individual services at a very high level, it abstracts away some details.

Like most modern cloud-native applications, our retail store application will run in a containerized or virtualized environment. And here is the catch:

- Each instance of the order processing service exposes some set of REST API endpoints at a particular location (host and port).

- Virtual machines and containers could have dynamic IP addresses - this implies having instances of the order processing service with dynamic locations.

- As mentioned earlier, the number of order processing service instances could be scaled up and down on the fly. For example, an Amazon EC2 Auto Scaling group adjusts the number of instances based on load. The number of running instances can also change when some instances fail to start or crash.

In essence, before our load balancer can evenly distribute requests among the available service instances, it first has to know which instances are available and where to find them.

More technically, we need a way to allow our load balancer to forward requests to a dynamically changing set of ephemeral order processing service instances. And this brings us to the concept of…

Service discovery

As mentioned earlier, the number of instances of a service in a microservice architecture is dynamic, and their locations can be dynamic as well. Service discovery is the mechanism you put in place so that services can still find and talk to each other.

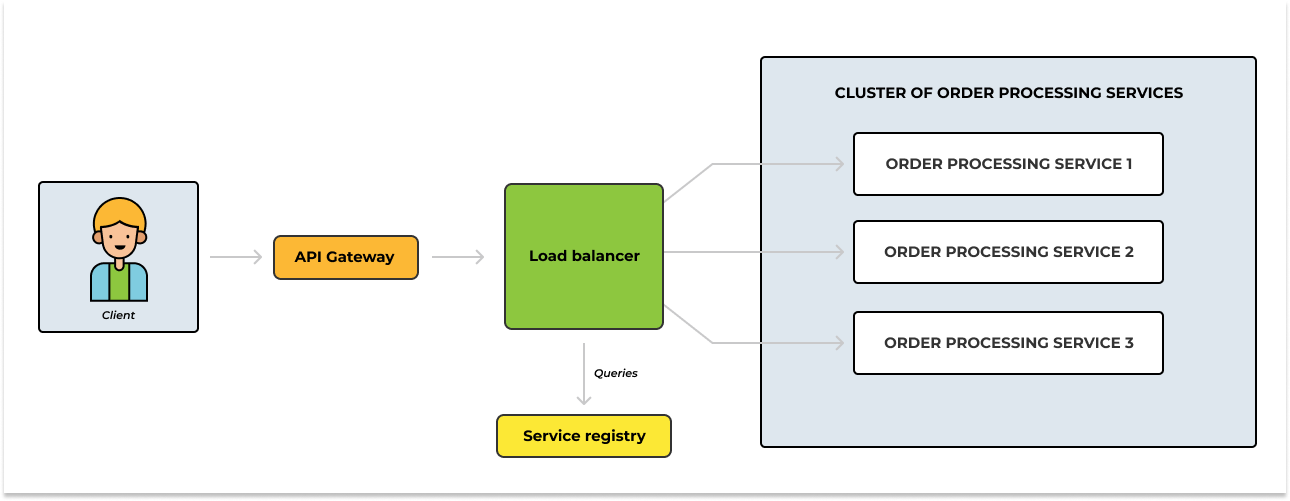

One way to solve this problem is to adopt the service registry pattern. You can incorporate a service registry into your architecture - functioning as a database, the service registry keeps track of every instance of the order processing service and their respective locations.

When a new instance of the order processing service starts up, it gets added to the service registry, and when it shuts down, it gets removed. With this in place, our load balancer can learn the available instances of the order processing service and their respective locations by querying the service registry. Service registries typically also offer a health check feature, so the registry can verify that an instance is working properly and able to handle requests.
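The register, deregister and query steps described above can be sketched as a small in-memory registry. This is a simplified illustration under stated assumptions, not the API of any real registry product: the `ServiceRegistry` class, its method names, the service name and the addresses are all hypothetical, and health checking is approximated with heartbeats and a time-to-live rather than active probes.

```python
import time


class ServiceRegistry:
    """In-memory service registry with heartbeat-based health (sketch)."""

    def __init__(self, ttl_seconds=30.0, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so the example is deterministic
        self._instances = {}  # service name -> {address: last heartbeat time}

    def register(self, service, address):
        """Called by an instance when it starts up."""
        self._instances.setdefault(service, {})[address] = self._clock()

    def heartbeat(self, service, address):
        """Called periodically by a running instance to signal it is alive."""
        self.register(service, address)

    def deregister(self, service, address):
        """Called by an instance when it shuts down cleanly."""
        self._instances.get(service, {}).pop(address, None)

    def healthy_instances(self, service):
        """Return addresses whose last heartbeat is within the TTL."""
        now = self._clock()
        return sorted(
            address
            for address, last_seen in self._instances.get(service, {}).items()
            if now - last_seen <= self._ttl
        )


# A fake clock keeps the walkthrough below repeatable.
fake_now = [0.0]
registry = ServiceRegistry(ttl_seconds=30.0, clock=lambda: fake_now[0])

# Two instances start up at t=0 and register themselves.
registry.register("order-processing", "10.0.0.11:8080")
registry.register("order-processing", "10.0.0.12:8080")

# At t=20 only the first instance sends a heartbeat.
fake_now[0] = 20.0
registry.heartbeat("order-processing", "10.0.0.11:8080")

# At t=40 the second instance has been silent for longer than the TTL.
fake_now[0] = 40.0
available = registry.healthy_instances("order-processing")

# The first instance shuts down and removes itself.
registry.deregister("order-processing", "10.0.0.11:8080")
after_shutdown = registry.healthy_instances("order-processing")
```

A load balancer built on this would call `healthy_instances("order-processing")` before choosing a target, instead of relying on a fixed list of addresses.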

The image below throws the service registry component into the mix.

Figure 2 - API Gateway, load balancer and service registry in an HTTPS-based microservice

Throughout this piece, we’ve talked about how HTTPS-based microservices introduce the need for load balancing and service discovery. Just to be clear, we are not invalidating these approaches - they just require more work and attention to specific details. How so?

By limiting everything to the load balancer and the order processing service in our examples, we intentionally trivialized what this work would look like in reality. In more complex scenarios, you will need to implement this load balancing and service discovery mechanism for every service that you want to run more than one instance of at a given time.

If we decide to run multiple instances of the inventory service, then there has to be some load balancing and service discovery between the order processing service and inventory service.

Additionally, if we are going with the service registry pattern, then we’d also have to think about how service instances get added to and removed from the service registry. Ok, is there any alternative?

Well, I propose…

Message queues and microservices

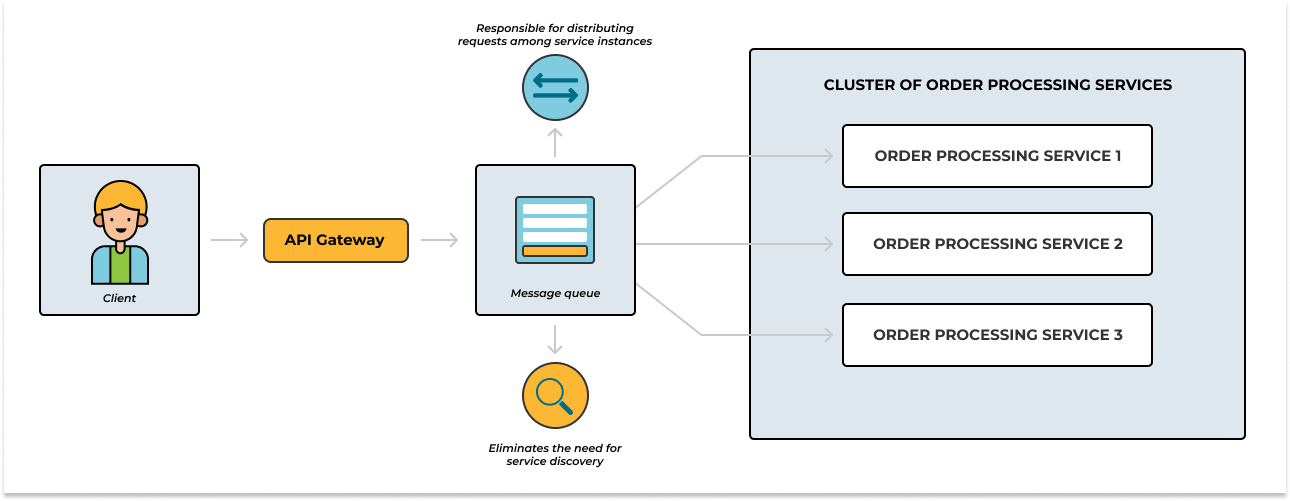

Service discovery is simplified in message-queue-based microservices. Instead of relying on external registries or service discovery mechanisms, the message queue itself acts as a central hub for communication.

Services publish messages to the queue and consume messages from it, without explicitly needing to know the location or address of other services. This removes the complexity of continuously tracking and updating service locations and allows services to be added or removed on the fly without disrupting the communication flow.

To publish or consume messages from a message queue, services only need to connect to the message queue using the queue’s relevant connection details.

As for load balancing, a message-queue-based microservice architecture also removes the need for a separate, dedicated load balancer. Load balancing becomes an inherent part of the architecture.

When multiple consumers are bound to the message queue, in our case multiple order processing services, the queue will distribute messages among these instances as per your configuration.

Figure 3 - Message queue as load balancer
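The idea of several consumers sharing one queue can be sketched without a broker at all. The example below uses Python's standard-library `queue.Queue` as a stand-in for a message queue and threads as stand-ins for order processing instances; the worker names and order IDs are hypothetical, and a real broker adds networking, acknowledgements and persistence that this sketch leaves out.

```python
import queue
import threading

order_queue = queue.Queue()   # stand-in for the broker's queue
processed = {}                # worker name -> list of order IDs it handled
processed_lock = threading.Lock()


def order_worker(name):
    """Simulates one order processing instance consuming from the queue."""
    while True:
        order_id = order_queue.get()
        if order_id is None:          # sentinel: no more work for this worker
            order_queue.task_done()
            break
        with processed_lock:
            processed.setdefault(name, []).append(order_id)
        order_queue.task_done()


# Three consumers attach to the same queue.
workers = [
    threading.Thread(target=order_worker, args=(f"order-processing-{i}",))
    for i in range(1, 4)
]
for worker in workers:
    worker.start()

# The publisher only knows about the queue, not about the consumers.
for order_id in range(1, 11):
    order_queue.put(order_id)

# One sentinel per worker so each of them shuts down.
for _ in workers:
    order_queue.put(None)

order_queue.join()
for worker in workers:
    worker.join()

all_processed = sorted(
    order_id for ids in processed.values() for order_id in ids
)
```

Every order is handled exactly once, and the publisher never needs the address of any consumer. How the orders are split between the workers depends on thread scheduling here; with a real broker, the split follows the broker's dispatch rules and your configuration.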

Conclusion

HTTPS-based microservices introduce the need for separate load-balancing mechanisms and complex service discovery. Adopting message-queue-based microservices, however, removes most of this work.

With message-queue-based microservices, the message queue itself is responsible for distributing requests among the instances of a service, eliminating the need for explicit load balancing via a third party. Service discovery is also simplified.

Ready to start using message queues in your distributed architecture? RabbitMQ is the most popular message queueing technology and CloudAMQP is one of the world’s largest RabbitMQ cloud hosting providers. In addition to RabbitMQ, we also created our in-house message broker, LavinMQ - we benchmarked its throughput at around 1,000,000 messages/sec.

Easily create a free RabbitMQ or free LavinMQ instance today to start testing out message queues. You will be asked to sign up first if you do not have an account, but it’s super easy to do.