The previous article in this series explored what Message Queues are. To build on that knowledge, this article will cover some practical use cases of Message Queues. To begin, let’s reiterate the core function of a Message Queue.

Fundamentally, Message Queues foster asynchronous communication between Producers and Consumers.

Producers and Consumers are interacting systems: they could be processes on the same computer, modules of the same application, or services running on different computers. Because Message Queues sit between processes or applications and let them communicate, they are a good fit for:

- Decoupling long-running processes from a live user request

- Serving as the medium of communication between services in distributed architectures

Let’s delve more into each use case.

Decoupling long-running processes from a user request

Traditional web applications execute requests synchronously by default. In user-facing applications, it is common to have a user request that triggers some long-running action. In such situations, this synchronous execution increases the response time considerably. But how, you might ask?

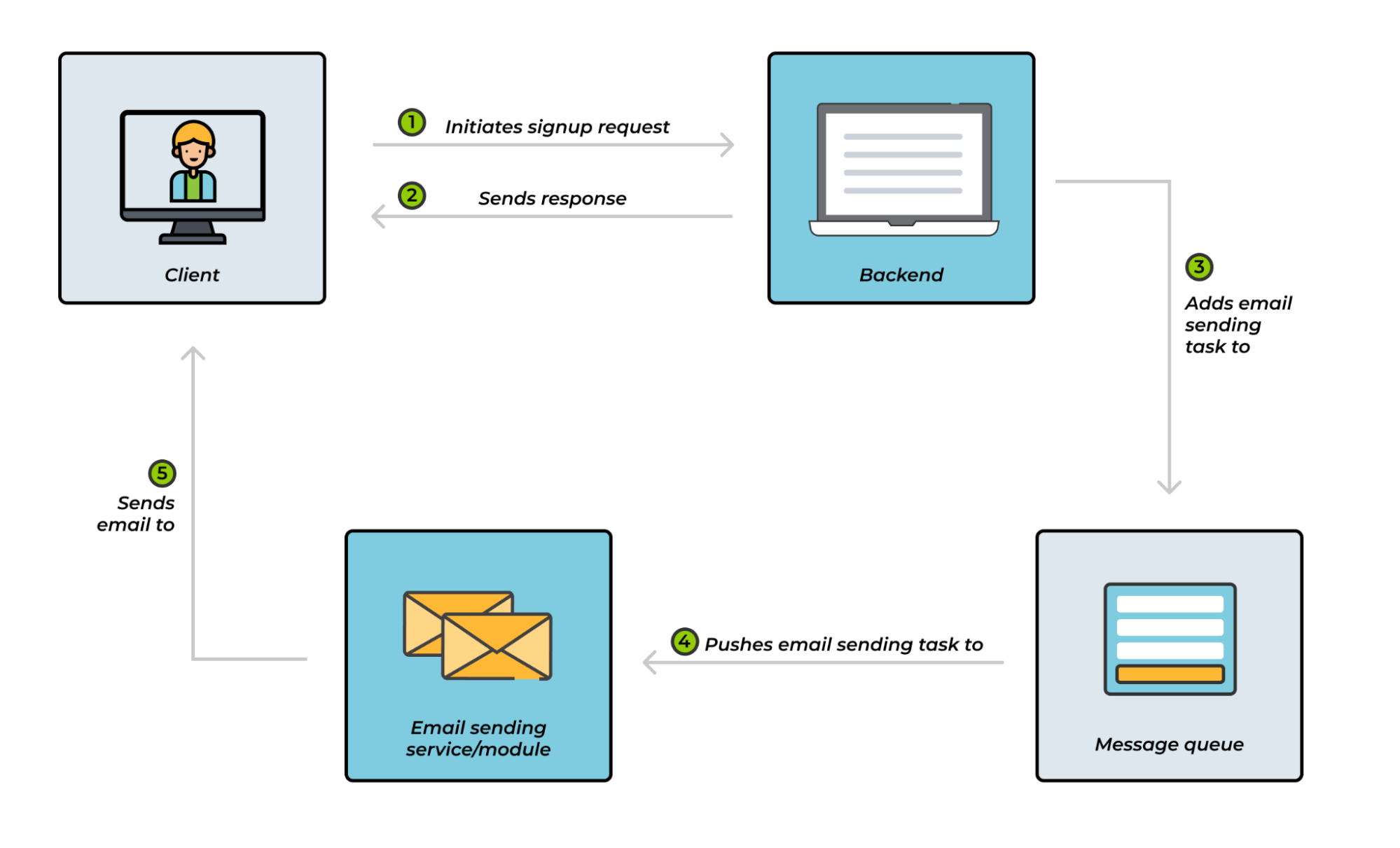

Let's take the case of a user signup flow where a welcome email has to be sent to the user. When a user signs up, the HTTP request-response cycle will look like this:

1. The user submits their data through a web form

2. An HTTP request is sent to the server

3. The server validates the user data

4. The server persists the user data in a database

5. The server interfaces with an external service like Twilio to send the user a welcome email

6. The server then sends a response back to the user, e.g., “your account has been created”

Because the execution is synchronous, the steps above are executed sequentially. Step 5 requires connecting to an external service and sending an email, which can take some time and, in turn, raises the overall response time. The user has to wait for every step to finish before getting a response.

As you already know, keeping your users waiting for long periods is an easy way to lose their attention. The wait could get even worse if thousands of users are trying to sign up simultaneously, and some requests may eventually time out. But how can this problem be solved?

Notice how the result of the action that takes the most time (sending the email - step 5) does not even impact the response sent to the user? Thus, we could reduce the wait time dramatically if step 5 (sending email) is pulled out of the request-response cycle. We can do that with a Message Queue.

When users sign up, the server puts the email-sending task on a Message Queue and responds almost immediately. A consumer picks the task up and processes it later. This replaces the initial time-consuming synchronous processing with asynchronous processing.

Figure 1 - Sending an email asynchronously with a Message Queue
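The flow in Figure 1 can be sketched in a few lines of Python. This is a minimal illustration, not a production setup: the standard library's in-process `queue.Queue` stands in for a real message broker, and `handle_signup`, `send_welcome_email`, and `email_worker` are hypothetical names invented for this example. Validation and persistence are omitted.

```python
import queue
import threading
import time

email_queue = queue.Queue()  # assumption: stands in for a real message broker
sent_emails = []


def send_welcome_email(address):
    """Hypothetical slow call to an external email provider."""
    time.sleep(0.2)  # simulate network latency
    sent_emails.append(address)


def handle_signup(address):
    """Producer: validate and persist the user (omitted), then enqueue the email task."""
    email_queue.put(address)  # returns immediately; no email is sent here
    return "your account has been created"


def email_worker():
    """Consumer: takes tasks off the queue and sends the emails."""
    while True:
        address = email_queue.get()
        try:
            send_welcome_email(address)
        finally:
            email_queue.task_done()


threading.Thread(target=email_worker, daemon=True).start()

start = time.perf_counter()
response = handle_signup("user@example.com")
elapsed = time.perf_counter() - start  # time the user waited for a response

email_queue.join()  # demo only: wait for the worker before exiting
print(response, f"(responded in {elapsed:.3f}s)")
```

The signup handler returns without waiting for the 0.2-second email call, which is the whole point of moving step 5 out of the request-response cycle. With a real broker, the worker would typically run as a separate process or service.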

Although the example above uses a Message Queue to pull the task of sending an email out of the request-response cycle, the same approach extends to similar scenarios.

Whenever there is a long-running process with a result that does not impact the response sent to the user, we can use a Message Queue to decouple that process from the request-response cycle and avoid potentially long wait times. Some common tasks that are great candidates for a Message Queue include image scaling, video encoding, search engine indexing, and PDF processing.

Message Queues in distributed architectures

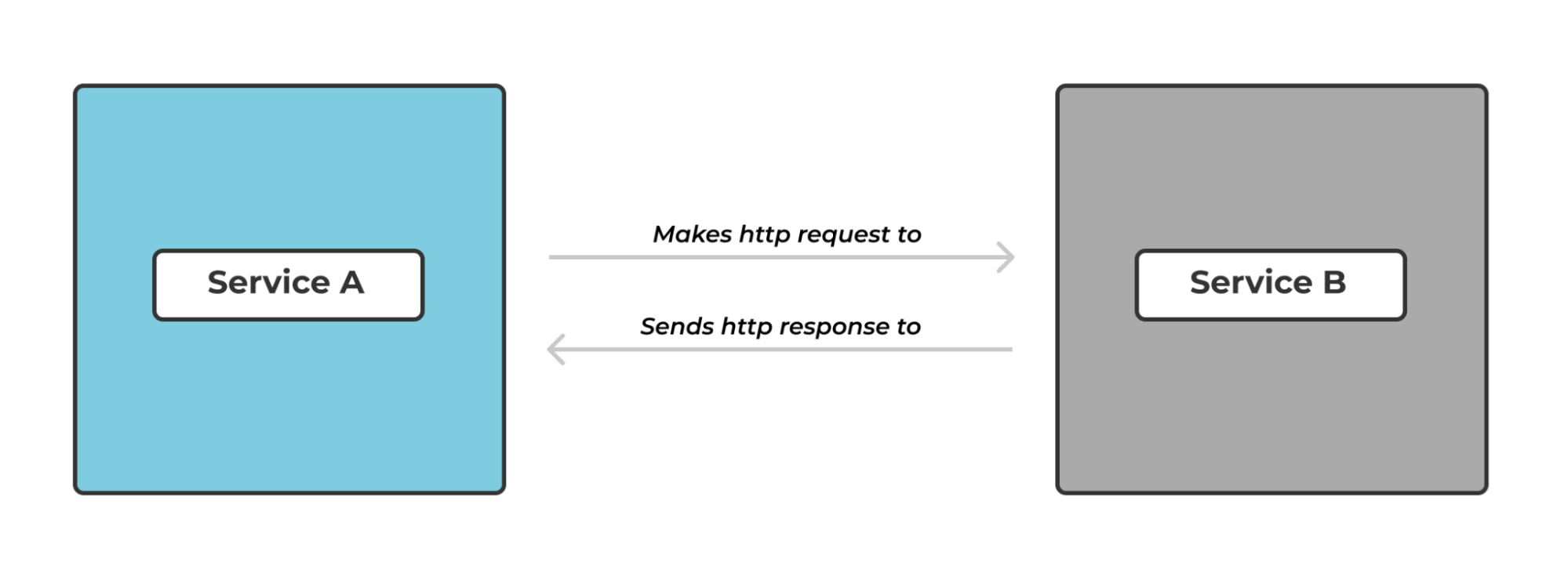

In a microservice architecture, it is common to have small services that communicate with each other. The implementation of how these services communicate varies across teams. For example, the easiest but possibly naive way is to have these services communicate synchronously via API calls. What does a synchronous API call entail?

Imagine you are working on a microservice architecture with two services: service A and service B. The communication between these two services would be synchronous if service A makes an HTTP request to service B, waits idly for the response, and only proceeds to the next task after getting a response from service B.

Figure 2 - Synchronous communication between two services

While the pattern of communication above works, it is flawed in the following way:

Tight coupling

One of the goals of microservices is to build small, autonomous services that remain available even if one or more other services are down, with no single point of failure. Because service A connects directly to service B and waits for its response, the two services are coupled in a way that undermines the autonomy each service should have.

This is true because:

- If service B fails unexpectedly, service A is also unable to serve its requests because it relies on the response it gets from service B.

- If service A experiences a spike in traffic, service B is affected too, since service A forwards its requests to service B directly.
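The first failure mode can be illustrated with a small Python sketch. The classes `ServiceA` and `ServiceB`, the `online` flag, and the `ServiceUnavailable` exception are all hypothetical, and a plain method call stands in for the HTTP request between the two services.

```python
class ServiceUnavailable(Exception):
    """Raised when a downstream service cannot be reached."""


class ServiceB:
    def __init__(self):
        self.online = True  # toggled below to simulate an outage

    def process(self, payload):
        if not self.online:
            raise ServiceUnavailable("service B is down")
        return f"processed {payload}"


class ServiceA:
    def __init__(self, service_b):
        self.service_b = service_b

    def handle_request(self, payload):
        # Synchronous call: A blocks here and cannot answer without B's result.
        return self.service_b.process(payload)


service_b = ServiceB()
service_a = ServiceA(service_b)

print(service_a.handle_request("order-1"))  # works while B is up

service_b.online = False  # simulate service B failing unexpectedly
try:
    service_a.handle_request("order-2")
except ServiceUnavailable as error:
    print(f"service A failed too: {error}")
```

Service A has no way to complete a request once service B is unavailable, because every request passes straight through to it.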

But how can we manage these limitations?

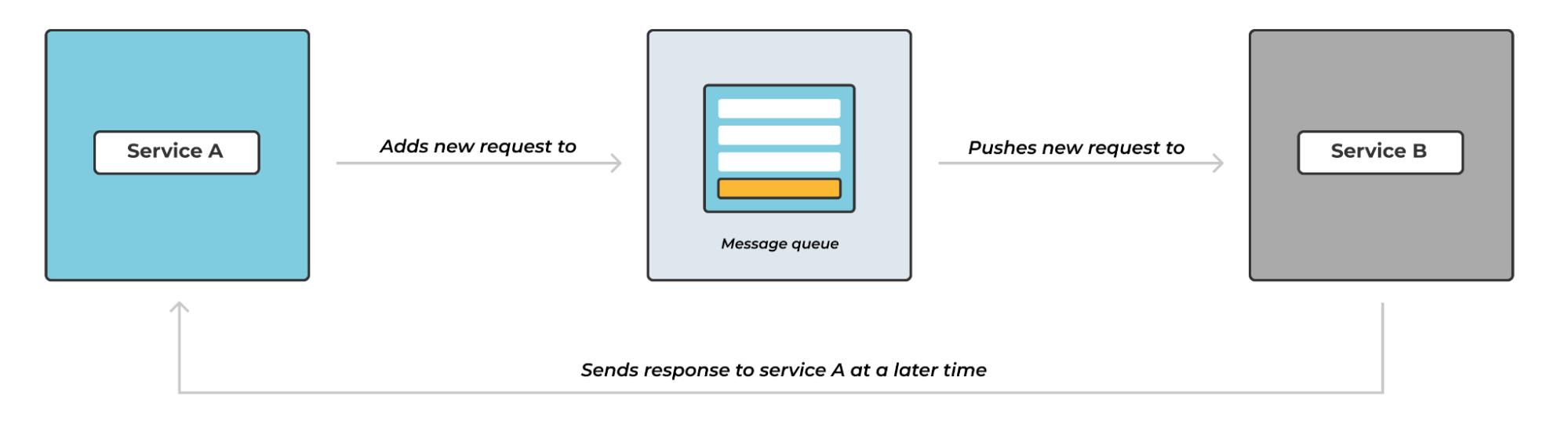

Message Queues would come in handy here. We could use Message Queues to introduce asynchronous communication between the two services. Instead of service A making requests to service B directly, we can introduce a Message Queue between these two services.

In essence, when service A receives a new request, it adds that request to the Message Queue. Service B then picks up the request and processes it at its own pace.

Figure 3 - Asynchronous communication between two services

This approach decouples the two services and, by extension, makes them autonomous. This is true because:

- If service B fails unexpectedly, service A keeps accepting requests and adding them to the queue as though nothing happened. The requests wait in the queue until service B is back online to process them.

- If service A experiences a spike in traffic, service B won’t even notice, because it only picks up requests from the Message Queue at its own pace.

- This approach also makes it easy to add more queues or run more copies of service B to process more requests.
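The three points above can be sketched with Python's standard library. As before, this is an illustration under assumptions: an in-process `queue.Queue` stands in for a real message broker, threads stand in for separate services, and `service_a_handle` and `service_b_worker` are hypothetical names.

```python
import queue
import threading

request_queue = queue.Queue()  # assumption: stands in for a real message broker
processed = []
processed_lock = threading.Lock()


def service_a_handle(payload):
    """Service A only enqueues; it never calls service B directly."""
    request_queue.put(payload)
    return "accepted"


def service_b_worker(name):
    """One copy of service B, pulling requests at its own pace."""
    while True:
        payload = request_queue.get()
        if payload is None:  # sentinel value: stop this worker
            request_queue.task_done()
            break
        with processed_lock:
            processed.append((name, payload))
        request_queue.task_done()


# Service B is "down": nothing is consuming, yet A keeps accepting requests.
responses = [service_a_handle(f"order-{i}") for i in range(5)]
backlog = request_queue.qsize()

# Service B comes back, scaled out to two copies sharing the same queue.
workers = [
    threading.Thread(target=service_b_worker, args=(f"B{i}",)) for i in range(2)
]
for worker in workers:
    worker.start()
request_queue.join()  # wait until the backlog is drained
for _ in workers:
    request_queue.put(None)  # one stop sentinel per worker
for worker in workers:
    worker.join()

print(responses, backlog, sorted(payload for _, payload in processed))
```

Service A answers all five requests while no consumer is running, and the backlog is drained once the two copies of service B start. Which copy handles which request is not deterministic, which is why the sketch only checks the set of processed payloads.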

Conclusion

This article covered some of the use cases of Message Queues. The intrinsic ability of Message Queues to enable two or more processes to communicate asynchronously makes them fit for very specific use cases.

For example, we can use them to pull long-running actions out of the HTTP request-response cycle and, by extension, reduce response time. We can also use queues as the medium of communication between the services in distributed architectures.

Even though this article presented two practical use cases of Message Queues, this series will focus on working with Message Queues in a microservice architecture. The next article in this series will explore RabbitMQ, one of the most popular Message Queueing technologies out there.

For any suggestions, questions, or feedback, get in touch with us at contact@cloudamqp.com